The Imperative of Speed in AI Servers

The demand for computing power in artificial intelligence, particularly for Large Language Models (LLMs) and generative AI, is growing exponentially. Meeting it is not solely a matter of faster GPUs. It involves the entire hardware stack, from processors to memory and interconnects. To support increasingly complex and voluminous workloads, AI servers must deliver high performance and minimal latency.

In this context, the need for faster AI servers is driving a significant shift in component design and manufacturing. Every element of the infrastructure must be optimized to handle massive data throughput while preserving signal integrity. The overall performance of an AI system depends on all of its parts, and a single bottleneck can compromise the efficiency of the entire deployment.

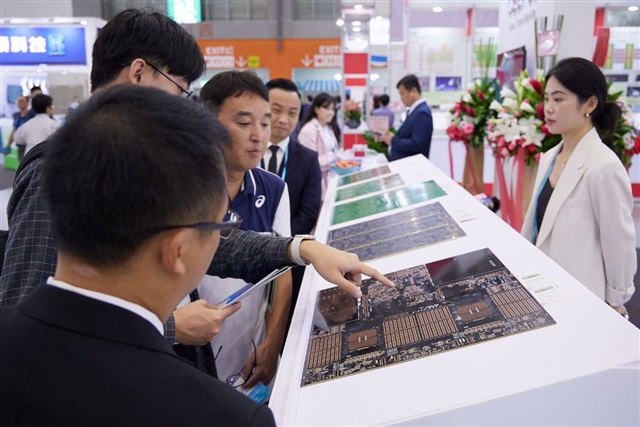

The Crucial Role of Advanced PCB Technologies

Printed Circuit Boards (PCBs) represent a fundamental, often underestimated, element in the architecture of modern AI servers. With increasing operating frequencies and component density, traditional PCBs are no longer sufficient. New generations of AI servers require PCBs capable of supporting higher signal density, maintaining signal integrity at high frequencies, and effectively managing power distribution and heat dissipation.

This has driven the adoption of low-loss dielectric materials, complex multi-layer designs, and advanced routing techniques. These innovations are essential for high-speed interconnects such as recent generations of PCIe and NVLink, which link GPUs and other accelerators at ever-increasing bandwidth. A PCB's ability to meet these requirements directly affects how fast data can move between GPUs (and their VRAM) and the rest of the system, which in turn has a critical impact on LLM training and inference performance.
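As a rough illustration of the bandwidth figures at stake, the sketch below computes theoretical per-direction PCIe bandwidth from the per-lane transfer rate and lane count. It assumes the 128b/130b line encoding used by PCIe 3.0 through 5.0 and ignores protocol overhead (packet headers, flow control), so real throughput is lower. The helper name `link_bandwidth_gbytes` is hypothetical.

```python
# Per-lane transfer rates (GT/s) for PCIe generations that use 128b/130b encoding.
PCIE_GT_PER_S = {3: 8.0, 4: 16.0, 5: 32.0}


def link_bandwidth_gbytes(gt_per_s: float, lanes: int) -> float:
    """Theoretical one-direction bandwidth in GB/s (hypothetical helper).

    Assumes 128b/130b encoding and ignores protocol overhead,
    so measured throughput will be lower than this figure.
    """
    encoding_efficiency = 128 / 130
    usable_gbits = gt_per_s * lanes * encoding_efficiency  # Gb/s after encoding
    return usable_gbits / 8  # bits -> bytes


if __name__ == "__main__":
    for gen, rate in PCIE_GT_PER_S.items():
        bw = link_bandwidth_gbytes(rate, 16)
        print(f"PCIe {gen}.0 x16: {bw:.1f} GB/s per direction")
```

Each generation doubles the per-lane rate and therefore the link bandwidth. The higher signaling frequencies that make this possible also suffer greater loss in the board material, which is why faster links push designs toward low-loss dielectrics.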

Implications for On-Premise LLM Deployments

For organizations that choose on-premise or self-hosted deployment for their AI workloads, the evolution of PCB technologies has significant implications. Investing in servers with advanced PCBs affects Total Cost of Ownership (TCO), which covers not only initial capital expenditure (CapEx) but also energy efficiency and hardware upgrade cycles. Higher-performing, but also more complex, servers demand more sophisticated infrastructure management, including advanced cooling systems, more datacenter space, and robust power delivery.
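To make the TCO trade-off concrete, here is a deliberately simplified model that adds CapEx to energy costs over the hardware's service life (the upgrade cycle). Energy is scaled by PUE (Power Usage Effectiveness) to account for cooling and power-delivery overhead. The class and function names and all input figures in the usage example are hypothetical placeholders, and the model ignores staffing, space, maintenance, and financing costs.

```python
from dataclasses import dataclass

HOURS_PER_YEAR = 8760


@dataclass
class ServerCostInputs:
    capex: float          # purchase price of the server(s), in currency units
    avg_power_kw: float   # average IT power draw under typical load, in kW
    pue: float            # datacenter Power Usage Effectiveness (>= 1.0)
    price_per_kwh: float  # electricity price per kWh
    service_years: float  # years until the next hardware refresh


def simple_tco(inputs: ServerCostInputs) -> float:
    """CapEx plus energy cost over the service life (hypothetical simplified model)."""
    facility_kw = inputs.avg_power_kw * inputs.pue  # IT load plus cooling/power overhead
    annual_energy_cost = facility_kw * HOURS_PER_YEAR * inputs.price_per_kwh
    return inputs.capex + annual_energy_cost * inputs.service_years


if __name__ == "__main__":
    # Hypothetical figures, for illustration only.
    example = ServerCostInputs(capex=300_000, avg_power_kw=10.0, pue=1.4,
                               price_per_kwh=0.15, service_years=4)
    print(f"Estimated TCO: {simple_tco(example):,.0f}")
```

Because the energy term grows with service life and PUE, a more expensive but more efficient server can still come out ahead over a long refresh cycle. Only real vendor quotes and local energy prices can settle that for a given deployment.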

The choice of cutting-edge hardware technologies is particularly relevant for those prioritizing data sovereignty, regulatory compliance, and security in air-gapped environments. In these scenarios, complete control over hardware and infrastructure is paramount. An organization's ability to effectively implement and manage AI servers with advanced PCBs becomes a critical factor for the success of LLM deployments. For those evaluating on-premise deployments, AI-RADAR offers analytical frameworks on /llm-onpremise to assess the trade-offs between performance, costs, and control.

Future Prospects and Challenges

The drive toward ever more powerful AI servers is set to continue, and with it the evolution of PCB technologies. Future challenges will include miniaturization, the integration of a growing number of components, and the exploration of new, more exotic materials to further improve electrical and thermal performance. PCB energy efficiency will become an increasingly critical factor as the industry seeks to reduce both the carbon footprint of datacenters and the operating costs of their energy consumption.

In summary, the performance of an AI system is the sum of its parts, and PCBs, though often invisible, are a critical link in the chain. Understanding the impact of advanced PCB technologies is essential for CTOs, DevOps leads, and infrastructure architects who must make informed decisions about LLM deployments, balancing performance, costs, and data sovereignty requirements in a rapidly evolving technological landscape.
