A strategic shift for SK Hynix

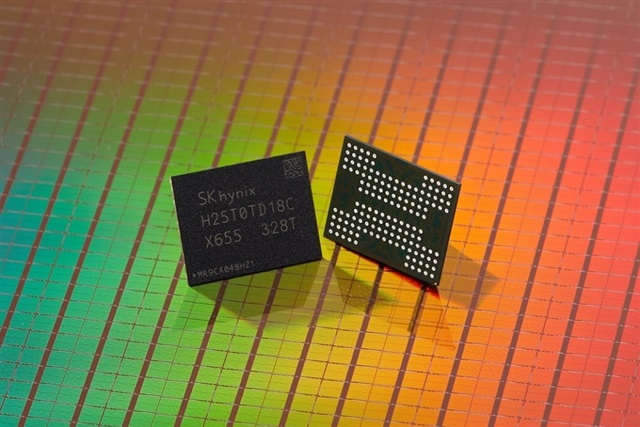

SK Hynix, a key player in the memory market, has announced a strategic decision that will reshape its NAND memory production: the company intends to devote more than half of its total NAND output to next-generation 321-layer chips. The move signals a clear shift toward more advanced, higher-density storage technology.

NAND memory is a fundamental component in a wide range of applications, from consumer devices to enterprise servers and data centers. Its evolution is crucial to meeting the growing demand for data storage, driven in particular by the expansion of workloads tied to artificial intelligence and Large Language Models (LLMs).

321-layer NAND technology: density and performance

Progress in NAND chips is often measured by the number of vertical layers. Adding layers makes it possible to stack more memory cells in the same physical footprint, significantly increasing storage density per unit area. The 321-layer chips are a notable step forward in this direction, surpassing previous generations.

Beyond storing more data, this higher density can also help improve the energy efficiency and overall performance of storage devices. For data centers, it translates into a smaller physical footprint and potentially a lower TCO (total cost of ownership), thanks to more capacity per rack and optimized power consumption.
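To make the capacity-per-rack argument concrete, here is a minimal first-order sketch. It assumes, purely for illustration, that per-drive capacity scales linearly with layer count while die area, cells per layer and bits per cell stay constant; real process generations change several of these at once. The base drive capacity, base layer count and target capacity are hypothetical numbers, not SK Hynix specifications, and the helper names are illustrative.

```python
import math


def scaled_capacity(base_capacity_tb: float, base_layers: int, new_layers: int) -> float:
    """First-order estimate: capacity grows linearly with layer count.

    Assumes die area, cells per layer and bits per cell are unchanged
    (a simplifying, hypothetical assumption).
    """
    return base_capacity_tb * new_layers / base_layers


def drives_needed(target_tb: float, drive_capacity_tb: float) -> int:
    """Number of whole drives required to reach target_tb of raw capacity."""
    if drive_capacity_tb <= 0:
        raise ValueError("drive capacity must be positive")
    return math.ceil(target_tb / drive_capacity_tb)


# Hypothetical figures for illustration only.
BASE_DRIVE_TB = 30.0   # assumed capacity of a drive built on an older NAND generation
BASE_LAYERS = 200      # assumed layer count of that older generation
NEW_LAYERS = 321       # layer count cited in the article
TARGET_TB = 1000.0     # assumed raw capacity an on-premise cluster needs

new_drive_tb = scaled_capacity(BASE_DRIVE_TB, BASE_LAYERS, NEW_LAYERS)
print(f"estimated drive capacity: {new_drive_tb:.2f} TB")
print("drives (base):", drives_needed(TARGET_TB, BASE_DRIVE_TB))
print("drives (321-layer):", drives_needed(TARGET_TB, new_drive_tb))
```

With these assumed inputs the script prints an estimated 48.15 TB per drive and shows the drive count for 1,000 TB falling from 34 to 21. The point is the direction of the effect (fewer drives, and therefore less rack space, for the same capacity), not the specific figures.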

Implications for data centers and AI workloads

The move to NAND with more layers has a direct impact on IT infrastructure, particularly infrastructure that runs intensive workloads such as LLM training and inference. These models need fast access to enormous datasets, so storage speed and capacity become critical limiting factors.

For organizations evaluating on-premise deployments, adopting high-density NAND storage is essential. It makes it possible to build more compact and efficient local infrastructure while preserving data sovereignty and direct control over the hardware. Handling large volumes of data locally at high throughput is key to optimizing AI pipelines and reducing latency. AI-RADAR offers analytical frameworks at /llm-onpremise for evaluating the trade-offs between self-hosted and cloud solutions, taking into account factors such as TCO and performance requirements.

The future of storage for artificial intelligence

SK Hynix's move reflects a broader trend in the memory industry, where the pursuit of higher density and performance is relentless. As artificial intelligence advances and increasingly complex models proliferate, demand for cutting-edge storage solutions is set to keep growing rapidly.

Advances in NAND technology, such as those represented by 321-layer chips, are a cornerstone for building infrastructure capable of supporting the next generation of AI applications. This progress not only improves storage capacity but also opens up new possibilities for data processing and operational efficiency in data centers around the world.
