The AI Server Boom and the Demand for Advanced Cooling

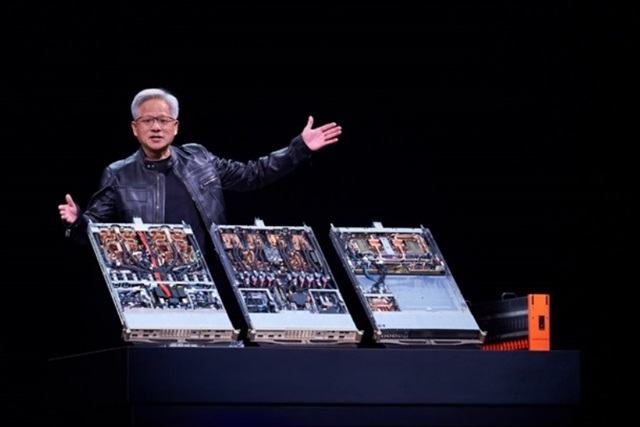

The rapid expansion of artificial intelligence, especially Large Language Models (LLMs), is generating unprecedented demand for high-performance servers. These systems, which pack a growing number of powerful GPUs, are the core of AI training and inference operations. Their computational capacity comes with significant heat output, which makes thermal management a critical and often underestimated component of the infrastructure.

In this scenario, Taiwanese companies specializing in thermal solutions are experiencing a period of strong growth. According to market analyses, players like AVC and Auras are positioned to lead a wave of expansion by 2026, capitalizing on the need for increasingly sophisticated cooling systems for next-generation AI servers. This trend underscores the strategic importance of the supply chain for AI hardware.

The Crucial Role of Cooling in On-Premise AI

For organizations choosing to deploy AI workloads, including LLMs, in self-hosted or air-gapped environments, cooling efficiency is a decisive factor. GPUs such as the NVIDIA A100 or H100, with their high VRAM capacities and computational density, can each dissipate hundreds of watts as heat under load. Without adequate dissipation, operating temperatures can exceed the hardware's rated limits, leading to performance throttling, system instability, and, in the long term, hardware failures.
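To give a sense of scale, the per-GPU figures can be rolled up into a rough per-rack heat load. The sketch below is only a back-of-the-envelope estimate: the wattages are assumed round numbers for the SXM variants, and `overhead_fraction`, the server count, and the function name are hypothetical values chosen for illustration, not measurements.

```python
# Approximate maximum board power in watts for SXM variants.
# These are assumed round figures; check the vendor datasheet for your model.
GPU_TDP_WATTS = {"A100-SXM": 400, "H100-SXM": 700}

WATTS_TO_BTU_PER_HOUR = 3.412  # 1 W is about 3.412 BTU/h


def rack_heat_load_kw(gpu_model, gpus_per_server, servers_per_rack,
                      overhead_fraction=0.3):
    """Estimate the heat a rack must reject, in kW.

    overhead_fraction is a hypothetical allowance for CPUs, memory, NICs,
    fans and power-supply losses, expressed as a fraction of GPU power.
    """
    gpu_watts = GPU_TDP_WATTS[gpu_model] * gpus_per_server
    server_watts = gpu_watts * (1 + overhead_fraction)
    return server_watts * servers_per_rack / 1000.0


if __name__ == "__main__":
    for model in GPU_TDP_WATTS:
        kw = rack_heat_load_kw(model, gpus_per_server=8, servers_per_rack=4)
        btu = kw * 1000 * WATTS_TO_BTU_PER_HOUR
        print(f"{model}: {kw:.1f} kW per rack ({btu:,.0f} BTU/h)")
```

Under these assumptions, a rack of four 8-GPU H100 servers lands at roughly 29 kW, while the same layout with A100s is closer to 17 kW. Real figures depend on the exact server design and utilization.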

The choice between air cooling and liquid solutions, such as direct-to-chip liquid cooling, therefore becomes a key architectural decision. While air cooling is simpler to implement, liquid solutions offer superior thermal dissipation capacity, essential for ultra-high-density servers and for maintaining consistent throughput during intensive training or inference sessions. This choice directly impacts not only performance but also hardware longevity and overall deployment reliability.
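The gap in dissipation capacity follows from basic heat transfer: the heat a coolant stream carries away is Q = ρ · V · c_p · ΔT, where V is the volumetric flow. The sketch below rearranges that relation to compare the flow of air and of water needed to remove the same heat. It assumes plain water and textbook room-temperature properties; production loops often use water-glycol mixtures, and the 700 W load and 10 K temperature rise are illustrative assumptions.

```python
# Approximate fluid properties near room temperature (assumed constants).
AIR = {"density": 1.2, "specific_heat": 1005.0}      # kg/m^3, J/(kg*K)
WATER = {"density": 997.0, "specific_heat": 4186.0}  # kg/m^3, J/(kg*K)


def required_flow_m3_per_s(heat_watts, delta_t_kelvin, fluid):
    """Volumetric flow needed to carry heat_watts with a given temperature rise.

    Rearranged from Q = density * flow * specific_heat * delta_T.
    """
    if delta_t_kelvin <= 0:
        raise ValueError("delta_t_kelvin must be positive")
    return heat_watts / (fluid["density"] * fluid["specific_heat"] * delta_t_kelvin)


if __name__ == "__main__":
    heat = 700.0    # W, one high-end GPU (assumed)
    delta_t = 10.0  # K, allowed coolant temperature rise (assumed)
    air = required_flow_m3_per_s(heat, delta_t, AIR)
    water = required_flow_m3_per_s(heat, delta_t, WATER)
    print(f"air:   {air * 1000:.1f} L/s")
    print(f"water: {water * 60000:.2f} L/min")
    print(f"air needs about {air / water:.0f}x the volume of water")
```

Because water is far denser and has a higher specific heat, it needs a volumetric flow a few thousand times smaller than air for the same heat and temperature rise, which is why liquid loops remain practical at densities where airflow does not.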

Implications for Infrastructure and TCO

The integration of advanced cooling systems has profound implications for the design and management of AI infrastructure. In terms of CapEx, liquid solutions may require a higher initial investment but can also enable greater compute density per rack, optimizing data center space. In terms of OpEx, the energy efficiency of cooling systems directly affects operational costs, particularly electricity consumption, which represents a significant component of the Total Cost of Ownership (TCO) for large-scale AI infrastructures.
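The CapEx versus OpEx trade-off can be made concrete with a simple calculation. The sketch below uses Power Usage Effectiveness (PUE, total facility energy divided by IT energy) as a stand-in for cooling efficiency. Every number in it, including the PUE values, the electricity price, and the extra investment, is a hypothetical placeholder to be replaced with site-specific data, and the function names are illustrative.

```python
HOURS_PER_YEAR = 8760


def annual_energy_cost(it_load_kw, pue, price_per_kwh):
    """Yearly electricity cost for a constant IT load.

    pue is Power Usage Effectiveness: total facility energy / IT energy.
    """
    if pue < 1.0:
        raise ValueError("PUE cannot be below 1.0")
    return it_load_kw * pue * HOURS_PER_YEAR * price_per_kwh


def payback_years(extra_capex, annual_saving):
    """Simple payback period; returns None when there is no saving."""
    if annual_saving <= 0:
        return None
    return extra_capex / annual_saving


if __name__ == "__main__":
    it_load_kw = 100.0  # hypothetical IT load
    price = 0.10        # hypothetical price per kWh
    air_cost = annual_energy_cost(it_load_kw, pue=1.5, price_per_kwh=price)
    liquid_cost = annual_energy_cost(it_load_kw, pue=1.2, price_per_kwh=price)
    saving = air_cost - liquid_cost
    years = payback_years(extra_capex=100_000.0, annual_saving=saving)
    print(f"air-cooled:    {air_cost:,.0f} per year")
    print(f"liquid-cooled: {liquid_cost:,.0f} per year")
    print(f"payback on extra CapEx: {years:.1f} years")
```

This ignores maintenance, water use, and the value of the extra rack density, so it is a starting point for a TCO model rather than a complete one.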

Thermal management is not just about preventing overheating; it is also about keeping components within their optimal temperature range to maximize lifespan and ensure predictable performance. For CTOs and infrastructure architects, carefully evaluating cooling options is fundamental to balancing performance, cost, and the long-term sustainability of AI deployments. For those evaluating on-premise deployments, analytical frameworks are available at /llm-onpremise to help assess these trade-offs.

Future Outlook and Data Sovereignty

The growth trend of Taiwanese companies in the thermal solutions sector highlights a critical component of the AI technology supply chain. As organizations seek to deploy LLMs and other AI applications in controlled environments for reasons of data sovereignty, compliance, or air-gapped security, the ability to effectively manage the heat generated by hardware becomes an enabling factor.

Robust and scalable cooling is therefore essential to support the widespread adoption of self-hosted AI infrastructure. The thermal solutions market, led by companies like AVC and Auras, is set to play an increasingly central role in AI deployments by allowing the necessary computational power to operate reliably and efficiently, even under the most demanding workloads.
