Viking AI's Growth Amidst Supply Chain Challenges

Viking AI recently announced a 12% increase in revenue, a result that reflects the continued expansion of the artificial intelligence sector and the company's ability to capitalize on it. The growth comes amid strong demand for AI solutions, which is prompting many organizations to invest in dedicated infrastructure, both in the cloud and on-premise.

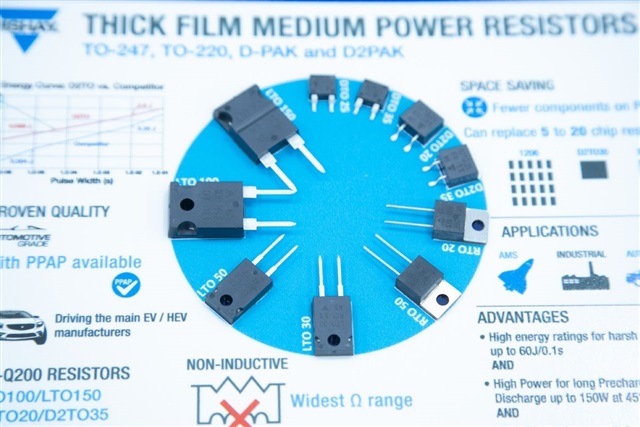

However, Viking AI's success comes in an increasingly complex industrial landscape. Alongside the revenue announcement came news of significantly longer lead times for resistors, a fundamental electronic component. These lead times have stretched to as much as 15 weeks, a worrying signal for the entire technology supply chain.

The Impact of Lead Times on Critical Components

The extension of lead times for components like resistors is not an isolated phenomenon but reflects broader pressure on the global supply chain. Resistors, while relatively simple elements, are ubiquitous in almost every electronic device, from servers to embedded systems, and their scarcity can have a cascading effect on the production of more complex hardware.

For companies developing or deploying AI solutions, this translates into potential delays in the manufacture of motherboards, GPU boards, power supply units, and other essential modules. Such delays can, in turn, limit the ability to scale AI infrastructure, both for training large language models (LLMs) and for large-scale inference. The availability of silicon and other components is therefore a critical factor in staying competitive and keeping to development roadmaps.

Implications for On-Premise LLM Deployments

For CTOs, DevOps leads, and infrastructure architects evaluating on-premise LLM deployments, extended component lead times pose a direct challenge. The decision to host AI infrastructure locally is often driven by the need for data sovereignty, regulatory compliance, long-term control of Total Cost of Ownership (TCO), and air-gapped environments. However, these advantages can be undermined if the necessary hardware takes months longer than planned to arrive.

Strategic planning therefore becomes crucial. Organizations must consider not only the technical specifications of GPUs (such as VRAM and throughput) but also the resilience of their hardware suppliers' supply chains. In practice, this can mean placing orders further in advance, diversifying suppliers, or maintaining safety stock, all of which affect overall TCO and deployment speed. For those evaluating on-premise deployments, AI-RADAR offers analytical frameworks on /llm-onpremise to assess the trade-offs between availability, cost, and control.
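As a rough illustration of how a 15-week lead time feeds into ordering decisions, the sketch below applies the textbook reorder-point rule (stock expected to be consumed during the lead time, plus safety stock) and back-calculates the latest date an order can be placed. The function names, the `buffer_weeks` parameter, and all input figures are hypothetical and chosen only for illustration; they are not Viking AI's or any supplier's data.

```python
from datetime import date, timedelta


def latest_order_date(need_by: date, lead_time_weeks: int, buffer_weeks: int = 0) -> date:
    """Hypothetical helper: the last day an order can be placed so that parts
    quoted at `lead_time_weeks` arrive by `need_by`. `buffer_weeks` adds
    margin in case quoted lead times slip further."""
    return need_by - timedelta(weeks=lead_time_weeks + buffer_weeks)


def reorder_point(weekly_demand: float, lead_time_weeks: float, safety_stock: float) -> float:
    """Textbook reorder-point rule: reorder when on-hand stock falls to the
    quantity expected to be consumed during the lead time, plus a safety buffer."""
    return weekly_demand * lead_time_weeks + safety_stock


if __name__ == "__main__":
    # Illustrative figures only: a 15-week quote, as reported for resistors.
    print(latest_order_date(date(2025, 6, 30), lead_time_weeks=15))  # 2025-03-17
    print(reorder_point(weekly_demand=200, lead_time_weeks=15, safety_stock=1000))  # 4000
```

Raising `buffer_weeks` or `safety_stock` buys protection against further delays at the cost of capital tied up earlier, which is one way longer lead times show up in TCO.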

Future Outlook and Mitigation Strategies

Facing these challenges, the industry is exploring various strategies to mitigate supply chain risks. Some companies are investing in greater vertical integration, producing more components in-house, while others are seeking to geographically diversify their supplier base. Designing hardware with greater flexibility in component usage, allowing for substitution with more readily available alternatives, is another approach.

Supply chain resilience is set to remain a central theme for the technology sector, particularly for AI, which is heavily dependent on specialized hardware. The ability of a company like Viking AI to grow in this context highlights the importance of agile and proactive management of resources and supplier relationships, aspects that will increasingly be decisive for success in the competitive landscape of artificial intelligence.