Tesla AI5: A Crucial Step Towards AI Chip Production

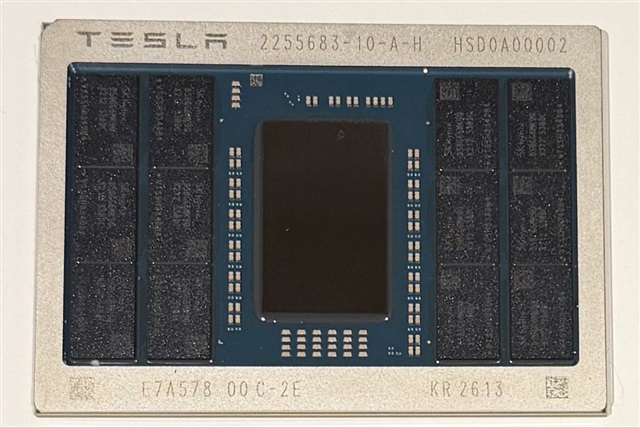

Tesla has announced a significant milestone in the development of its dedicated artificial intelligence chip, the AI5: reaching "tape-out." This term, fundamental in the semiconductor design industry, indicates that the chip's design has been completed and validated, and is now ready to be sent to the foundry for physical production. This is a critical phase that marks the transition from conceptual design to the concrete realization of hardware.

The announcement, shared by sources including @elonmusk, underscores Tesla's commitment to strengthening its internal capabilities for processing AI workloads. Developing proprietary silicon is a strategy increasingly adopted by large technology companies to optimize performance, reduce long-term operational costs, and keep tighter control over the entire technology pipeline. These are crucial aspects for those evaluating on-premise deployments of Large Language Models (LLMs).

Technical Details and Memory Implications

One detail that has emerged from early samples of the AI5 chip is the integration of SK Hynix memory. This choice has direct implications for the processor's performance and efficiency. Memory is a vital component for AI workloads, particularly for LLMs, where memory capacity (VRAM on GPUs) limits the size of the models that can be loaded and memory bandwidth limits the token throughput that can be sustained.
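As a rough illustration of why capacity and bandwidth matter, the sketch below applies two common rules of thumb: weight memory is roughly parameter count times bytes per parameter, and single-stream decoding cannot run faster than memory bandwidth divided by the size of the weights, since each generated token reads every weight once. The model size, precision, and bandwidth figures are hypothetical placeholders, not AI5 specifications, and the estimate ignores the KV cache, activations, and batching.

```python
def weights_gib(params_billion: float, bytes_per_param: float) -> float:
    """Approximate memory needed for the model weights alone, in GiB."""
    return params_billion * 1e9 * bytes_per_param / 2**30


def decode_tokens_per_s_ceiling(
    params_billion: float, bytes_per_param: float, bandwidth_gb_s: float
) -> float:
    """Rough upper bound on single-stream decode speed.

    Assumes decoding is memory-bound and that every generated token
    requires reading all weights once from memory.
    """
    weight_bytes = params_billion * 1e9 * bytes_per_param
    return bandwidth_gb_s * 1e9 / weight_bytes


if __name__ == "__main__":
    # Hypothetical inputs: a 7B-parameter model in 16-bit precision
    # on an accelerator with 1000 GB/s of memory bandwidth.
    size = weights_gib(7, 2)
    ceiling = decode_tokens_per_s_ceiling(7, 2, 1000)
    print(f"weights: {size:.1f} GiB, decode ceiling: {ceiling:.1f} tokens/s")
```

Halving the bytes per parameter (for example through quantization) halves the weight footprint and doubles the bandwidth-bound ceiling, which is why memory choices weigh so heavily on LLM serving.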

The selection of a specific supplier like SK Hynix suggests a targeted strategy to balance performance, cost, and supply chain availability. For companies considering self-hosted AI infrastructure deployments, the choice of memory and underlying silicon is a decisive factor for the Total Cost of Ownership (TCO) and the ability to scale operations while maintaining data sovereignty and regulatory compliance.

Context and Outlook for AI Deployments

The development of proprietary AI chips like Tesla's AI5 is part of a broader trend in which companies seek hardware solutions optimized for their specific needs. This approach contrasts with exclusive reliance on general-purpose GPUs, potentially offering advantages in energy efficiency, latency, and cost for specific AI workloads. For CTOs and infrastructure architects, the availability of specialized silicon opens new opportunities to optimize on-premise deployments.

The ability to control hardware at the chip level allows organizations to create air-gapped or highly secure environments, essential for sectors with stringent privacy and data sovereignty requirements. However, chip design and production are complex and costly processes that require significant investment and specialized expertise. That cost is the trade-off against the flexibility and availability of cloud solutions.

AI-RADAR's Role and Strategic Decisions

The evolution of AI hardware, as demonstrated by Tesla's AI5 chip, makes the evaluation of deployment options even more critical for businesses. The choice between cloud infrastructures and self-hosted solutions, or a hybrid approach, depends on a multitude of factors, including TCO, performance requirements, security constraints, and data sovereignty.
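To make the TCO comparison concrete, here is a minimal break-even sketch. It assumes a deliberately simplified cost model, with a one-off hardware purchase plus a flat monthly operating cost on one side and a flat monthly cloud bill on the other. The function name and all figures are hypothetical and for illustration only; a real evaluation would also account for depreciation, utilization, staffing, and hardware refresh cycles.

```python
from typing import Optional


def breakeven_months(
    capex: float, onprem_monthly_opex: float, cloud_monthly_cost: float
) -> Optional[float]:
    """Months until cumulative on-prem cost equals cumulative cloud cost.

    Returns None when on-prem never pays off, i.e. when its monthly
    operating cost is not lower than the cloud bill.
    """
    monthly_saving = cloud_monthly_cost - onprem_monthly_opex
    if monthly_saving <= 0:
        return None
    return capex / monthly_saving


if __name__ == "__main__":
    # Hypothetical figures: 120,000 upfront, 2,000/month to operate,
    # compared with a 7,000/month cloud bill for the same workload.
    months = breakeven_months(120_000, 2_000, 7_000)
    print(f"break-even after {months:.0f} months")
```

If the break-even point falls beyond the expected useful life of the hardware, the cloud or a hybrid approach is likely the cheaper option under these assumptions.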

AI-RADAR aims to provide analytical frameworks and technical insights to support decision-makers in this process. Understanding hardware specifications, supply chain implications, and the trade-offs between different architectures is fundamental for building resilient and high-performing AI infrastructures. For those evaluating on-premise deployments, AI-RADAR offers resources and analysis on /llm-onpremise to delve deeper into these aspects and make informed decisions.
