Tesla AI5: A New Chapter for AI Computing

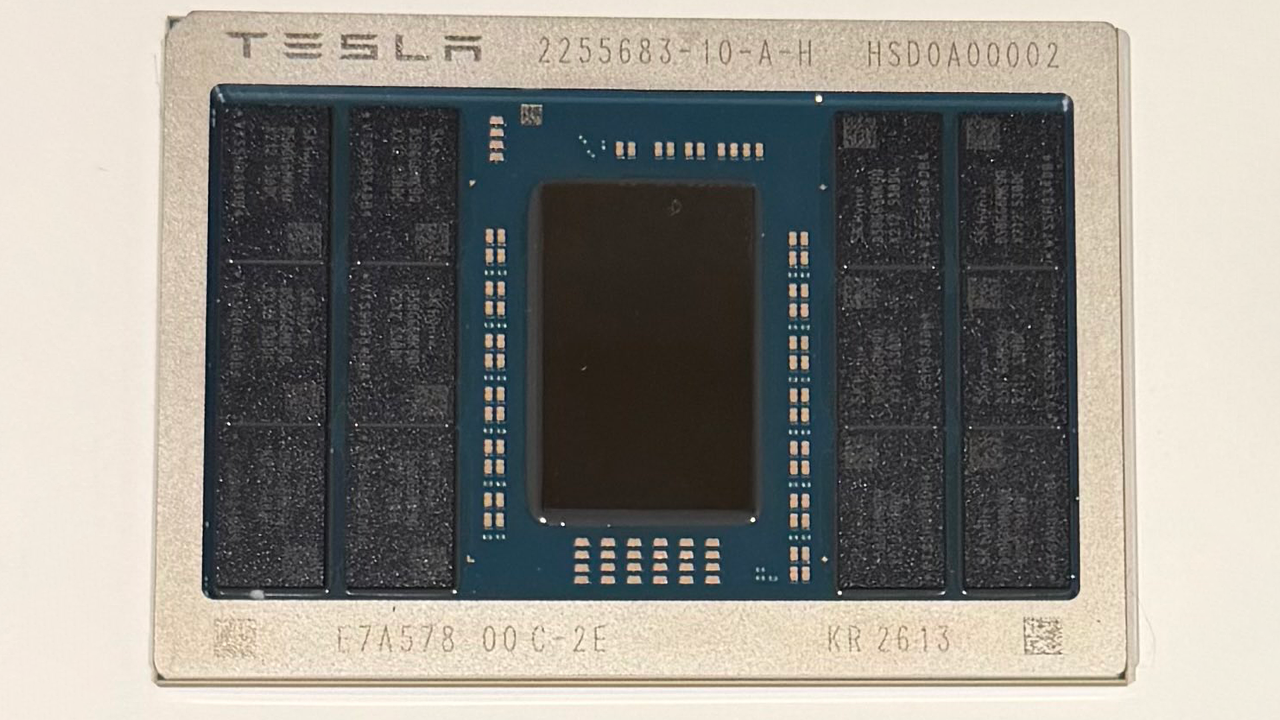

Elon Musk recently unveiled the first sample of the Tesla AI5 processor, a chip that promises to redefine the company's computing capabilities in artificial intelligence. During the presentation, Musk announced a performance increase of up to 40 times over its predecessor. The event was also marked by a small slip of the tongue, with the Tesla CEO thanking "TSC" instead of "TSMC," the Taiwanese semiconductor manufacturing giant.

This announcement underscores Tesla's commitment to developing proprietary silicon, a strategy aimed at optimizing hardware for its specific AI needs, particularly for autonomous driving systems and the training and inference workloads of large language models (LLMs). The ability to design and, potentially, produce chips in-house offers unprecedented control over the entire technological pipeline, from design to software integration.

Technical and Performance Implications

A 40x performance leap would be a significant achievement in the semiconductor industry. For AI workloads, it translates into greater efficiency in training complex models and lower latency for inference operations. The ability to process more tokens per second or handle larger batch sizes is crucial for applications requiring real-time responses and for optimizing overall throughput.
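To make the relationship between latency, batch size, and throughput concrete, here is a minimal sketch. It assumes, purely for illustration, that the "up to 40x" figure applies uniformly to the time needed to process a batch, which real workloads rarely achieve. The function names and the baseline numbers are hypothetical and do not come from Tesla.

```python
def scaled_latency(baseline_latency_s: float, speedup: float) -> float:
    """Latency after an idealized uniform speedup (an assumption, not a measurement)."""
    if baseline_latency_s <= 0 or speedup <= 0:
        raise ValueError("latency and speedup must be positive")
    return baseline_latency_s / speedup


def tokens_per_second(batch_size: int, tokens_per_sequence: int, latency_s: float) -> float:
    """Aggregate throughput when a whole batch finishes in latency_s seconds."""
    if latency_s <= 0:
        raise ValueError("latency_s must be positive")
    return batch_size * tokens_per_sequence / latency_s


# Hypothetical baseline: 8 sequences of 128 tokens generated in 4 seconds.
baseline = tokens_per_second(8, 128, 4.0)

# Same batch if the full "up to 40x" figure applied to latency (best case).
best_case = tokens_per_second(8, 128, scaled_latency(4.0, 40.0))

print(f"baseline: {baseline:.0f} tokens/s, best case: {best_case:.0f} tokens/s")
```

In practice the gain depends on memory bandwidth, numerical precision, and how well the software stack uses the chip, so the best-case figure should be read as an upper bound.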

For organizations evaluating on-premise LLM deployment, the emergence of highly specialized processors like the Tesla AI5 offers new opportunities. Such chips could ease the memory constraints of serving a given model or enable larger, more complex models to run locally, addressing challenges related to scalability and operational costs. The choice of dedicated hardware is often a key factor in achieving a competitive total cost of ownership (TCO) compared to cloud-based solutions.
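As a rough illustration of why memory matters for local deployment, the sketch below estimates the memory needed just to hold a model's weights (parameter count times bytes per parameter) and checks it against a device's capacity. The model size, device capacities, and headroom fraction are hypothetical; no memory specification for the AI5 is given in the announcement, and activations and caches add to the real footprint.

```python
def weight_memory_gib(num_parameters: float, bytes_per_parameter: float) -> float:
    """Memory needed to store the weights alone, in GiB."""
    if num_parameters < 0 or bytes_per_parameter <= 0:
        raise ValueError("invalid parameter count or bytes per parameter")
    return num_parameters * bytes_per_parameter / 2**30


def fits_in_memory(
    num_parameters: float,
    bytes_per_parameter: float,
    device_memory_gib: float,
    headroom_fraction: float = 0.2,
) -> bool:
    """True if the weights fit while leaving a fraction of memory free.

    The headroom is a hypothetical allowance for activations and caches.
    """
    usable_gib = device_memory_gib * (1.0 - headroom_fraction)
    return weight_memory_gib(num_parameters, bytes_per_parameter) <= usable_gib


# Hypothetical 7-billion-parameter model stored at 2 bytes per parameter.
needed = weight_memory_gib(7e9, 2)
print(f"weights: {needed:.1f} GiB")
print("fits in 16 GiB:", fits_in_memory(7e9, 2, 16))
print("fits in 24 GiB:", fits_in_memory(7e9, 2, 24))
```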

Control, Sovereignty, and On-Premise Deployment

The development of proprietary silicon by companies like Tesla reflects a broader trend in the tech industry: the pursuit of greater control over the entire hardware and software stack. This strategy is particularly relevant for companies handling sensitive data or operating in environments with stringent compliance and data sovereignty requirements. The deployment of self-hosted AI infrastructure, perhaps in air-gapped environments, becomes more feasible and performant with optimized hardware.

The availability of a chip with such high performance can profoundly influence deployment decisions. Instead of relying on general-purpose GPUs or cloud services, companies can consider investing in bare-metal infrastructure equipped with specialized silicon, gaining advantages in terms of security, customization, and, in the long term, TCO. For those evaluating on-premise deployment, AI-RADAR offers analytical frameworks on /llm-onpremise to assess the trade-offs between initial and operational costs and the benefits in terms of control and performance.
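The trade-off between initial and operational costs can be sketched as a simple break-even calculation. This is a deliberately simplified model, not AI-RADAR's framework: it assumes constant monthly costs and ignores depreciation, financing, and hardware refresh cycles. All figures and function names are hypothetical.

```python
import math
from typing import Optional


def cumulative_cost(upfront: float, monthly: float, months: int) -> float:
    """Total spend after a number of months, with constant monthly cost."""
    return upfront + monthly * months


def break_even_month(
    onprem_upfront: float, onprem_monthly: float, cloud_monthly: float
) -> Optional[int]:
    """First month at which on-premise spend is no higher than cloud spend.

    Returns None when the cloud is never more expensive per month,
    because the upfront investment is then never recovered.
    """
    monthly_saving = cloud_monthly - onprem_monthly
    if monthly_saving <= 0:
        return None
    return math.ceil(onprem_upfront / monthly_saving)


# Hypothetical figures: 120,000 upfront and 2,000/month on-premise,
# versus 7,000/month for an equivalent cloud service.
month = break_even_month(120_000, 2_000, 7_000)
print("break-even month:", month)
if month is not None:
    print("on-premise total:", cumulative_cost(120_000, 2_000, month))
    print("cloud total:", cumulative_cost(0, 7_000, month))
```

A shorter break-even horizon strengthens the case for dedicated hardware, while a horizon longer than the expected hardware lifetime favors the cloud.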

Future Prospects for the AI Ecosystem

The introduction of processors like the Tesla AI5 marks a significant evolution in the landscape of artificial intelligence hardware. As the race to develop LLMs continues, the availability of increasingly powerful and efficient silicon is fundamental to unlocking new capabilities and making AI more accessible and performant, even in local deployment contexts.

These advancements push companies to reconsider their infrastructure strategies, balancing the flexibility of the cloud with the advantages of control, security, and cost optimization offered by self-hosted solutions. The choice of the right hardware and the correct deployment architecture will remain a critical factor for the success of enterprise AI initiatives.
