Growing Bans on AI Data Centers in the United States

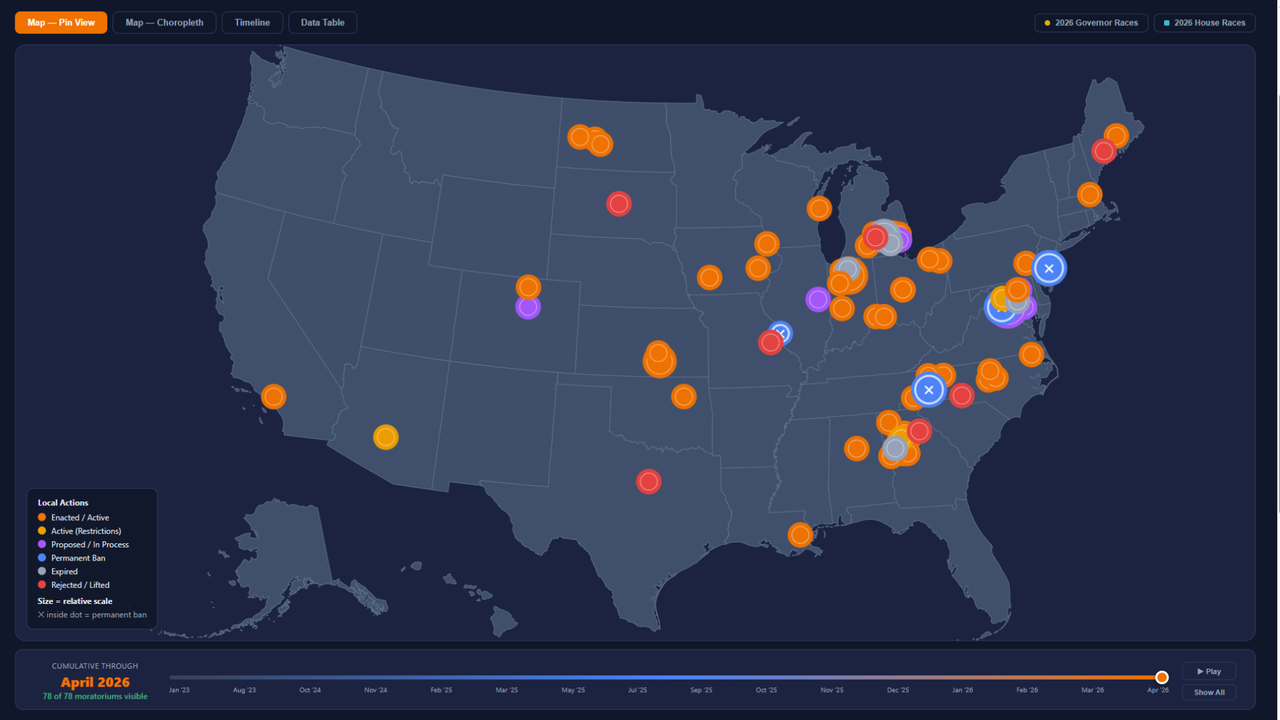

AI infrastructure in the United States is facing a new and significant challenge: a wave of moratoriums and bans on the construction of new data centers. According to recent tracking, 69 local jurisdictions have imposed temporary or permanent blocks on new data center construction. Four of these measures are permanent, a sign of growing community resistance.

This trend reflects an increasingly widespread concern regarding the impact that modern data centers, particularly those optimized for AI workloads, have on local resources. Key issues include energy consumption, water usage for cooling, and noise impact, all factors that are prompting communities to reconsider the expansion of such infrastructure within their territories.

The Challenges of Artificial Intelligence Data Centers

AI-dedicated data centers differ from traditional facilities in several key characteristics that amplify their impact. They require significantly higher power density to feed arrays of high-performance GPUs, which are essential for training and inference of Large Language Models (LLMs) and other complex models. This power density translates into greater cooling needs, often met by liquid or air systems that consume substantial amounts of water and energy.

The infrastructure required to support these workloads, including servers with large amounts of VRAM and high-speed networking, poses considerable planning and management challenges. Companies evaluating on-premise deployment for their AI workloads must consider not only capital (CapEx) and operating (OpEx) costs, but also the availability of power, water, and land, as well as local regulations that can vary drastically from one jurisdiction to another.

Implications for On-Premise Deployment and Data Sovereignty

The rise in bans on AI data centers has direct implications for organizations considering on-premise or hybrid deployment strategies. The difficulty in finding suitable sites and regulatory uncertainty can increase the Total Cost of Ownership (TCO) and prolong implementation times. For companies with stringent data sovereignty, compliance, or air-gapped environment requirements, on-premise deployment often remains the only viable option.
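To make the effect of siting and permitting delays on TCO concrete, here is a minimal Python sketch. It assumes a simple undiscounted model (CapEx, plus OpEx over the planning horizon, plus a monthly carrying cost during any delay). The class `OnPremScenario`, the function `total_cost_of_ownership`, and every figure are hypothetical illustrations, not data from the tracking cited above.

```python
from dataclasses import dataclass


@dataclass
class OnPremScenario:
    """Hypothetical inputs; none of these figures come from the article."""
    capex: float                      # up-front hardware and construction cost
    annual_opex: float                # yearly power, cooling, staff, maintenance
    years: int                        # planning horizon once the site is running
    delay_months: int = 0             # extra months spent on siting and permits
    monthly_delay_cost: float = 0.0   # assumed carrying cost per month of delay


def total_cost_of_ownership(s: OnPremScenario) -> float:
    """Undiscounted TCO: CapEx + OpEx over the horizon + cost of delay."""
    opex_total = s.annual_opex * s.years
    delay_total = s.delay_months * s.monthly_delay_cost
    return s.capex + opex_total + delay_total


# Same project, with and without a one-year permitting delay (made-up numbers).
baseline = OnPremScenario(capex=10_000_000, annual_opex=2_000_000, years=5)
delayed = OnPremScenario(capex=10_000_000, annual_opex=2_000_000, years=5,
                         delay_months=12, monthly_delay_cost=150_000)

print(total_cost_of_ownership(baseline))
print(total_cost_of_ownership(delayed))
```

A real evaluation would likely also discount future costs and account for hardware refresh cycles; the sketch leaves those out to keep the delay term visible.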

However, these new restrictions underscore the need for even more meticulous infrastructural planning. Site selection, negotiation with local authorities, and the design of energy-efficient systems become critical factors. AI-RADAR offers analytical frameworks on /llm-onpremise to help companies evaluate these complex trade-offs, considering aspects such as scalability, latency, and security requirements in a context of increasing environmental and regulatory scrutiny.

Future Outlook and Decision Trade-offs

The proliferation of AI data center bans indicates a turning point in the industry. Communities are becoming more aware and reactive to the ecological and infrastructural footprint of artificial intelligence. This scenario prompts companies to reconsider their deployment strategies, balancing the need for computational power with sustainability and regulatory compliance.

While the cloud offers seemingly limitless flexibility and scalability, control over data and long-term TCO optimization often favor self-hosted solutions. The challenge for the future will be to find a balance between technological innovation and environmental and social responsibility, ensuring that the expansion of AI infrastructure occurs sustainably and is acceptable to local communities.
