ASML Raises 2026 Revenue Guidance: AI Demand Strains Chip Supply Chain

ASML, a pivotal supplier in the global semiconductor supply chain, has raised its revenue forecast for 2026. The upward revision signals sustained, growing demand for chips, driven largely by the rapid expansion of artificial intelligence and, in particular, by the development and deployment of Large Language Models (LLMs).

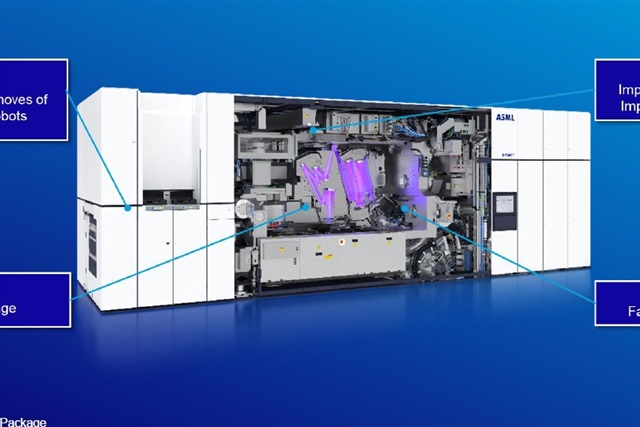

As a leader in advanced lithography systems, the Dutch company sits at the heart of an ecosystem in which any increase in high-performance chip production depends directly on its technologies. The decision to raise guidance reflects confidence in its market position, and it also acknowledges that semiconductor supply is struggling to keep pace with demand, a shortfall that ASML's own customers, the major chip manufacturers, are feeling acutely.

The Pressure of AI Demand on the Semiconductor Supply Chain

Artificial intelligence, and particularly workloads related to LLMs, demands unprecedented computational power. This translates into a massive requirement for GPUs and specialized AI accelerators with large amounts of VRAM and extensive parallel processing capabilities. Producing these advanced chips relies on extremely complex lithographic processes, in which ASML's machines play an irreplaceable role.

Demand for these components is not limited to large-scale model training but also extends to inference, which requires efficient hardware to handle millions of queries in real-time. This pressure propagates throughout the entire supply chain, from wafer manufacturers to equipment suppliers, creating bottlenecks and extended lead times that impact the entire technology sector.

Implications for On-Premise Deployments and TCO

For organizations evaluating on-premise LLM deployments, the current chip market situation has direct and significant implications. The scarcity of cutting-edge AI hardware, such as high-memory GPUs, can lead to prolonged waiting times and significantly higher acquisition costs (CapEx). This directly impacts the Total Cost of Ownership (TCO) of a self-hosted solution, making infrastructure planning even more complex.

The choice between cloud and on-premise infrastructure, already full of trade-offs around data sovereignty, compliance, and control, becomes even more critical when supply is limited. Companies must balance the need for control and security against hardware availability and cost, carefully considering amortization periods and future scalability. AI-RADAR analyzes these trade-offs in detail through its analytical frameworks, available at /llm-onpremise.
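To make the effect of higher CapEx and longer lead times on TCO concrete, the arithmetic can be sketched in a few lines of Python. This is only an illustrative model, not a methodology taken from ASML or AI-RADAR: the class name, the field names, and the idea of charging interim cloud spend during the hardware waiting period are all assumptions, and any figures passed in are hypothetical planning inputs rather than market data.

```python
from dataclasses import dataclass


@dataclass
class OnPremScenario:
    """Hypothetical planning inputs for a self-hosted LLM deployment."""
    capex: float                       # upfront hardware acquisition cost
    annual_opex: float                 # power, cooling, staff, maintenance per year
    amortization_years: int            # period over which the hardware is written off
    lead_time_months: float            # wait between ordering and receiving hardware
    interim_cost_per_month: float      # assumed stopgap spend (e.g. cloud) while waiting


def total_cost_of_ownership(s: OnPremScenario) -> float:
    """Simplified TCO: CapEx + OpEx over the amortization period + cost of waiting."""
    waiting_cost = s.lead_time_months * s.interim_cost_per_month
    return s.capex + s.annual_opex * s.amortization_years + waiting_cost


def annualized_tco(s: OnPremScenario) -> float:
    """TCO spread evenly across the amortization period."""
    return total_cost_of_ownership(s) / s.amortization_years


def tco_increase(baseline: OnPremScenario, constrained: OnPremScenario) -> float:
    """Relative TCO increase of a supply-constrained scenario over a baseline."""
    base = total_cost_of_ownership(baseline)
    return (total_cost_of_ownership(constrained) - base) / base
```

Comparing a baseline scenario with one that has a higher `capex` and a non-zero `lead_time_months` shows how quickly scarcity compounds: both the purchase price and the cost of waiting land in the same total. A real evaluation would also need to account for factors this sketch leaves out, such as financing, depreciation rules, and utilization.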

A Look at the Future of the AI Supply Chain

ASML's increased forecast is a barometer of the industry's confidence in the continued expansion of AI, but it also underscores the fragility of a global supply chain under stress. The ability to meet future demand for AI chips will depend not only on technological innovation but also on the capacity to scale up production, a process that requires massive investments and years to realize.

Strategic decisions made today by chip manufacturers and their suppliers like ASML will determine the speed and efficiency with which companies can implement their AI strategies in the coming years. Proactive supply chain management and diversification of deployment options will become critical factors for the success of initiatives based on LLMs and other artificial intelligence applications.
