Google's Distinctive Approach to AI Hardware

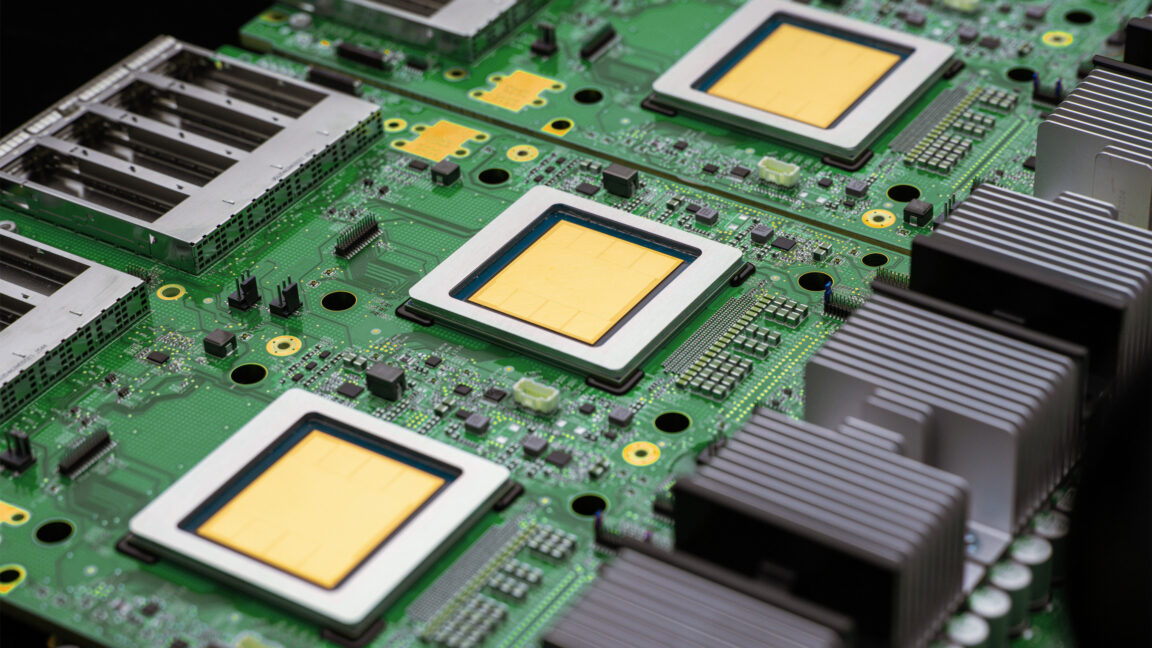

While most companies committed to building AI models are rapidly acquiring every available Nvidia AI accelerator, Google has taken a different path. Its cloud AI infrastructure primarily relies on its line of custom Tensor Processing Units (TPUs). After announcing the seventh-generation TPU, named Ironwood, in 2025, the company has now unveiled its eighth generation. This is not merely a faster iteration of the previous chip: the new generation splits the line into two chips, one specialized for training and one for inference.

This strategy underscores Google's commitment to developing proprietary silicon, which gives it deeper control over hardware optimization for its specific workloads. Designing custom chips offers significant advantages in energy efficiency and performance, both crucial for managing large-scale AI infrastructure.

TPU 8t and TPU 8i: Specialization for the Agentic Era

The new eighth-generation TPUs come in two distinct variants, providing Google and its customers with an AI platform that the company describes as faster and more efficient. Google is promoting the idea that the "agentic era" represents a fundamental shift from previous AI systems, necessitating a new approach to hardware. For this reason, engineers have devised the TPU 8t, optimized for training, and the TPU 8i, dedicated to inference.

The TPU 8t was designed specifically for the training phase, with the aim of reducing the time required to train frontier AI models from months to weeks. This matters because training Large Language Models (LLMs) and other cutting-edge models demands immense computational resources over long periods, and hardware specialized for training speeds up the iteration and development of new models. The TPU 8i, by contrast, focuses on inference: running trained models to generate responses or analyze data, where low latency and high throughput are the priorities.

Infrastructure and TCO Implications

Although Google's TPUs are primarily intended for the company's cloud infrastructure, the principles guiding their design have significant implications for those evaluating on-premise deployments. The distinction between hardware optimized for training and hardware for inference is fundamental to any AI infrastructure strategy. VRAM requirements, memory bandwidth, and computational power differ significantly between the two phases, directly impacting the Total Cost of Ownership (TCO) and operational efficiency.
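To make the memory gap between the two phases concrete, here is a minimal back-of-the-envelope sketch. It rests on assumptions not taken from Google's announcement: weights stored in bf16 for inference, and mixed-precision training with the Adam optimizer, which a common rule of thumb puts at roughly 16 bytes per parameter. Activations, KV cache, and parallelism overheads are deliberately ignored, and the 70-billion-parameter model is a hypothetical example.

```python
# Rough accelerator-memory estimate for a dense model.
# Assumptions (illustrative, not vendor figures):
#   - inference: bf16 weights only (2 bytes per parameter)
#   - training: mixed-precision Adam, i.e. bf16 weights + bf16 gradients
#     + fp32 master weights + two fp32 Adam moments (16 bytes per parameter)
#   - activations and KV cache are ignored

BYTES_BF16 = 2
BYTES_FP32 = 4


def inference_weight_bytes(n_params: int) -> int:
    """Bytes needed to hold bf16 weights for inference."""
    return n_params * BYTES_BF16


def training_state_bytes(n_params: int) -> int:
    """Bytes needed for weights, gradients, and Adam optimizer state."""
    per_param = BYTES_BF16 + BYTES_BF16 + 3 * BYTES_FP32  # 2 + 2 + 12 = 16
    return n_params * per_param


def to_gb(n_bytes: int) -> float:
    """Convert bytes to decimal gigabytes."""
    return n_bytes / 1e9


if __name__ == "__main__":
    n_params = 70_000_000_000  # hypothetical 70B-parameter model
    inf_gb = to_gb(inference_weight_bytes(n_params))
    train_gb = to_gb(training_state_bytes(n_params))
    print(f"inference weights: {inf_gb:.0f} GB")
    print(f"training state:    {train_gb:.0f} GB")
    print(f"ratio:             {train_gb / inf_gb:.0f}x")
```

Under these assumptions, training state needs about eight times the memory of inference weights alone, which is one reason the two phases tend to call for differently provisioned hardware.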

For organizations considering self-hosted solutions, the choice of specific accelerators for training or inference can determine scalability, energy costs, and the ability to handle diverse workloads. Hardware optimization, like that pursued by Google with its TPUs, is a key factor in maximizing return on investment and ensuring data sovereignty, especially in air-gapped environments or those with stringent compliance requirements. AI-RADAR offers analytical frameworks on /llm-onpremise to evaluate these trade-offs and support deployment decisions.

Future Prospects in the Agentic AI Era

The introduction of these new TPUs marks an important step in the evolution of AI hardware, particularly for the emerging "agentic era." In this paradigm, AI systems are not limited to responding to single queries but act autonomously, planning and executing sequences of actions to achieve complex goals. This requires not only more powerful models but also a hardware infrastructure capable of supporting more dynamic and interactive processing cycles.

Google's continued investment in custom silicon, as demonstrated by the eighth-generation TPUs, reflects a long-term vision in which hardware is co-designed with software to unlock new artificial intelligence capabilities. This trend towards hardware specialization is set to continue, offering new opportunities and challenges for CTOs and infrastructure architects who must balance performance, cost, and control in their AI deployments.
