Google redefines its TPU architecture with a dual-chip approach

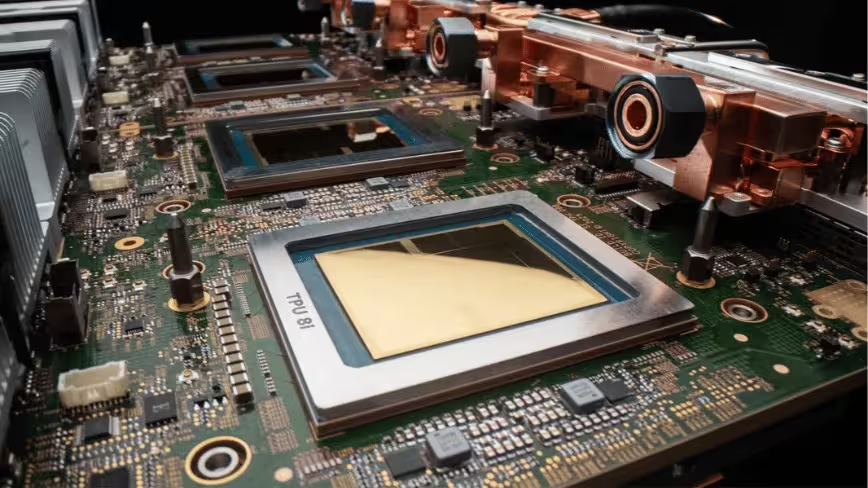

Google recently announced a significant shift in its strategy for Tensor Processing Units (TPUs), its dedicated AI accelerators. At Cloud Next 2026, the company made its seventh-generation TPU, Ironwood, generally available and previewed its eighth-generation architecture. The latter introduces a fundamental split into two separate chips: the TPU 8t (Sunfish) for model training and the TPU 8i (Zebrafish) for inference.

This move marks a turning point in AI chip design philosophy, reflecting growing specialization to optimize performance for each type of workload. The seventh generation, Ironwood, already delivers 4.6 petaFLOPS per chip and scales to 42.5 exaFLOPS in a superpod of 9,216 chips. These figures underline Google's commitment to supplying high compute capacity for the most demanding AI workloads.
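The two Ironwood figures can be checked against each other with simple arithmetic. The sketch below uses only the numbers quoted above; the function and variable names are illustrative, and it assumes the superpod total is a plain sum of per-chip peak throughput.

```python
# Figures quoted in the article for Ironwood (seventh-generation TPU).
PFLOPS_PER_CHIP = 4.6        # petaFLOPS per chip, as rounded in the text
CHIPS_PER_SUPERPOD = 9216    # chips in one superpod
QUOTED_SUPERPOD_EXAFLOPS = 42.5


def superpod_exaflops(pflops_per_chip: float, chips: int) -> float:
    """Aggregate peak compute in exaFLOPS (1 exaFLOPS = 1,000 petaFLOPS).

    Assumes the total is the simple sum of per-chip peaks.
    """
    return pflops_per_chip * chips / 1000.0


def implied_pflops_per_chip(exaflops: float, chips: int) -> float:
    """Per-chip petaFLOPS implied by a quoted superpod total."""
    return exaflops * 1000.0 / chips


total = superpod_exaflops(PFLOPS_PER_CHIP, CHIPS_PER_SUPERPOD)
implied = implied_pflops_per_chip(QUOTED_SUPERPOD_EXAFLOPS, CHIPS_PER_SUPERPOD)

print(f"Computed superpod total: {total:.2f} exaFLOPS")
print(f"Implied per-chip figure: {implied:.3f} petaFLOPS")
```

With the rounded 4.6 petaFLOPS figure the product comes out slightly below 42.5 exaFLOPS, which is what one would expect if the per-chip number was rounded down to one decimal place.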

Hardware specialization: training and inference on dedicated chips

The introduction of the TPU 8t and 8i signals a clear direction toward hardware specialization. The TPU 8t, designed by Broadcom, will be optimized for the intensive, long-running training of Large Language Models (LLMs) and other AI models. These jobs demand high floating-point throughput and efficient memory handling for large datasets. The TPU 8i, developed by MediaTek, will instead focus on inference, that is, running trained models to generate predictions or responses. Inference workloads typically benefit from high throughput and low latency, with particular attention to energy efficiency and the ability to handle variable batch sizes.

Both eighth-generation chips are intended to use TSMC's 2nm manufacturing process, a leading-edge technology that promises higher transistor density along with gains in energy efficiency and performance. The new architectures are expected to become available in late 2027, which gives companies a time frame for evaluating how these chips might fit into their infrastructure.

Implications for on-premise deployments and data sovereignty

The split between training and inference chips has significant implications for companies considering on-premise or hybrid deployments. Dedicated hardware allows resources to be matched more precisely to the workload, potentially lowering the Total Cost of Ownership (TCO) for specific use cases. For example, a company that mostly runs inference could opt for a larger number of TPU 8i chips, reducing operating costs and improving energy efficiency compared with a more general-purpose architecture.

For organizations with strict data sovereignty or compliance requirements, or that operate in air-gapped environments, specialized hardware offers more flexibility in designing local stacks. Being able to choose components suited to their own training or inference needs can make it easier to build robust, controlled AI infrastructure and reduce dependence on external cloud services for the most sensitive operations. AI-RADAR offers analytical frameworks at /llm-onpremise for evaluating the trade-offs between different deployment strategies.

The future of the AI chip war: a question of design philosophy

Google's decision to split its TPU architecture into dedicated training and inference units is not just a technical move; it reflects a broader "war of design philosophies" in the AI chip industry. While some players favor more general-purpose architectures or designs that try to balance both workloads, Google appears to be betting on extreme specialization to maximize efficiency and performance in specific usage scenarios.

This strategy could yield faster and more cost-effective solutions for certain applications, but it could also complicate infrastructure management for those who need the flexibility to run training and inference on the same hardware. The AI chip market continues to evolve rapidly, and Google's approach with the TPU 8t and 8i will be a key development to watch for understanding where AI hardware innovation is heading.
