Google specializes its TPU chips for AI training and inference

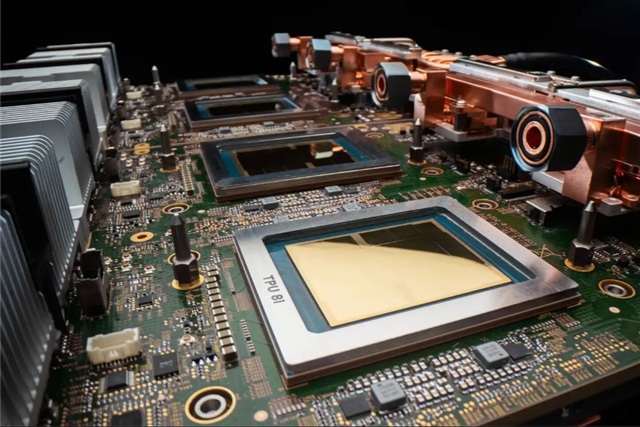

Google has announced a significant shift in its artificial intelligence strategy, introducing a clear split in its Tensor Processing Unit (TPU) line. The company is now developing specialized versions of these accelerators, optimized respectively for the training and inference phases of Large Language Models (LLMs) and other AI workloads. The move underscores a growing industry trend toward increasingly targeted AI infrastructure, designed to maximize efficiency and performance for specific computational needs.

Google's decision reflects the very different demands that training and inference place on hardware. Where more general-purpose architectures were once the norm, the complexity and scale of today's LLMs make specialization a key lever for optimizing resources and costs. This approach has direct implications for companies evaluating AI deployment strategies, whether in the cloud or on-premise, because it affects both Total Cost of Ownership (TCO) and operational capabilities.

The differences between training and inference, and their hardware requirements

The distinction between training and inference is not purely conceptual: it translates into profoundly different hardware requirements. Training AI models, and LLMs in particular, is an extremely intensive process that demands massive compute, high accelerator-memory bandwidth, and support for higher-precision floating-point operations (such as FP16 or BF16). The main goal is throughput, that is, processing enormous amounts of data in the shortest possible time to train the model. This often means distributed architectures with hundreds or thousands of accelerators working in parallel.
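
To give a sense of why training runs are spread over so many accelerators, the sketch below estimates training time using the widely used rule of thumb of roughly 6 FLOPs per parameter per training token for dense transformer models. The model size, token count, per-chip peak rate, and utilization figure are all hypothetical values chosen for illustration; they do not describe any specific TPU generation.

```python
SECONDS_PER_DAY = 86_400


def training_flops(num_params: float, num_tokens: float) -> float:
    """Approximate total training compute for a dense transformer.

    Uses the common ~6 * parameters * tokens rule of thumb (an approximation,
    not an exact count).
    """
    return 6.0 * num_params * num_tokens


def training_days(
    num_params: float,
    num_tokens: float,
    num_chips: int,
    peak_flops_per_chip: float,
    utilization: float,
) -> float:
    """Estimate wall-clock training time in days.

    `utilization` is the fraction of peak compute actually sustained (0 < u <= 1).
    """
    if not 0 < utilization <= 1:
        raise ValueError("utilization must be in (0, 1]")
    sustained_flops_per_second = num_chips * peak_flops_per_chip * utilization
    seconds = training_flops(num_params, num_tokens) / sustained_flops_per_second
    return seconds / SECONDS_PER_DAY


if __name__ == "__main__":
    # Hypothetical workload: 7B parameters trained on 1T tokens.
    # Hypothetical hardware: 2e14 FLOP/s peak per chip at 40% utilization.
    for chips in (64, 256, 1024):
        days = training_days(7e9, 1e12, chips, 2e14, 0.4)
        print(f"{chips:5d} chips -> {days:8.1f} days")
```

Under these assumptions the estimate scales inversely with the number of chips, which ignores communication overhead; real scaling is less than linear.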

Inference, on the other hand, means applying an already trained model to generate predictions or responses. Here the priority often shifts to low latency and energy efficiency per request. Compute capacity still matters, but inference can benefit from lower precisions (such as INT8, or even INT4 through quantization) to reduce memory requirements and increase throughput for small batches. The accelerator memory required can vary considerably with model size and context window, but inference optimization aims to serve as many requests as possible with the lowest resource consumption.
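
The effect of precision and context window on memory can be sketched with simple arithmetic. The example below is a rough estimate only: it counts weight storage and the key/value cache of a standard transformer decoder, and ignores activations, quantization scale factors, and runtime overhead. The model shape (layers, heads, head dimension) is a hypothetical configuration, not a description of any particular model.

```python
def weight_memory_gb(num_params: float, bits_per_param: int) -> float:
    """Memory needed to store the weights, in gigabytes (1 GB = 1e9 bytes)."""
    return num_params * bits_per_param / 8 / 1e9


def kv_cache_gb(
    num_layers: int,
    num_kv_heads: int,
    head_dim: int,
    context_len: int,
    batch_size: int = 1,
    bytes_per_value: int = 2,
) -> float:
    """Key/value cache size in gigabytes.

    The leading factor of 2 accounts for storing both keys and values
    for every layer, head, and token position.
    """
    values = 2 * num_layers * num_kv_heads * head_dim * context_len * batch_size
    return values * bytes_per_value / 1e9


if __name__ == "__main__":
    params = 7e9  # hypothetical 7B-parameter model
    for name, bits in (("FP16/BF16", 16), ("INT8", 8), ("INT4", 4)):
        print(f"{name:10s} weights: {weight_memory_gb(params, bits):5.1f} GB")

    # Hypothetical shape: 32 layers, 32 KV heads, head dimension 128.
    for ctx in (4_096, 32_768):
        print(f"context {ctx:6d}: KV cache {kv_cache_gb(32, 32, 128, ctx):5.2f} GB")
```

Halving the bits per weight halves the weight footprint, while the cache grows linearly with context length and batch size, which is why the two need to be sized together.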

Implications for on-premise deployment and TCO

Specialized AI hardware has a direct impact on companies' deployment decisions. For those evaluating on-premise solutions, the choice between training-optimized and inference-optimized chips becomes crucial for managing TCO. Buying training hardware, which is extremely expensive, may be justified only for continuous workloads or where data sovereignty over sensitive datasets must be maintained. Once the initial training is complete, however, these resources may sit underutilized.
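
The underutilization risk can be made concrete with a deliberately simplified cost model. The sketch below amortizes the purchase price linearly over the hardware's lifetime, assumes operating costs (power, cooling, hosting) are paid whether or not the hardware is busy, and divides by the fraction of time it does useful work. All figures are hypothetical, and the function name is illustrative rather than taken from any tool.

```python
HOURS_PER_YEAR = 365 * 24


def cost_per_useful_hour(
    purchase_cost: float,
    lifetime_years: float,
    hourly_operating_cost: float,
    utilization: float,
) -> float:
    """Effective cost of one hour of useful work on owned hardware.

    Assumes linear amortization and that operating costs accrue even
    when the hardware is idle (a simplification).
    """
    if not 0 < utilization <= 1:
        raise ValueError("utilization must be in (0, 1]")
    hourly_capex = purchase_cost / (lifetime_years * HOURS_PER_YEAR)
    return (hourly_capex + hourly_operating_cost) / utilization


if __name__ == "__main__":
    # Hypothetical system: 262,800 purchase price, 3-year lifetime,
    # 2.00 per hour in operating costs (currency units are arbitrary).
    for utilization in (0.9, 0.5, 0.25):
        cost = cost_per_useful_hour(262_800, 3, 2.0, utilization)
        print(f"utilization {utilization:4.0%}: {cost:6.2f} per useful hour")
```

In this model the effective cost is inversely proportional to utilization, so hardware that is busy a quarter of the time costs four times as much per useful hour as hardware that is always busy.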

By contrast, inference-optimized hardware can offer better cost-effectiveness for production workloads, where scalability and latency are critical. Companies that want to keep full control over their data and models, operating in air-gapped environments or under strict compliance requirements, will find in hardware specialization an opportunity to build more efficient self-hosted infrastructure. The ability to size inference resources precisely, reducing energy consumption while maximizing token throughput, is a key factor in optimizing long-term operating costs.

The future of specialized AI infrastructure

Google's move with its TPUs reflects a broader trend in the technology sector: the growing fragmentation and specialization of AI hardware. Other players are exploring similar approaches, from low-power edge chips for inference to accelerators built for particular machine learning workloads. This evolution creates new challenges and opportunities for CTOs, DevOps leads, and infrastructure architects.

Balancing performance, cost, flexibility, and data sovereignty requirements is becoming increasingly complex. Choosing the right hardware is no longer a "one-size-fits-all" matter; it requires a detailed analysis of the expected workloads, the models to be deployed, and the business objectives. Adopting specialized AI infrastructure, whether on-premise or hybrid, will require careful planning and a deep understanding of the technical and economic trade-offs to ensure long-term success.
