The Emergence of Memory Bottlenecks in AI Infrastructure

The rapid growth of artificial intelligence workloads, particularly Large Language Model (LLM) inference, is putting considerable pressure on data center infrastructure. A Micron Senior Vice President has observed that memory bottlenecks are emerging as a serious threat to GPU efficiency in these environments. The issue is not new, but it becomes more pressing as AI models grow in size and complexity.

GPU memory capacity and bandwidth have become critical performance factors. While the computational power of graphics processors continues to evolve rapidly, the speed at which data can be transferred to and from memory can limit overall throughput, preventing processing units from operating at their full capacity. This scenario has direct implications for companies seeking to scale their AI capabilities, whether in the cloud or in self-hosted environments.

The Critical Role of VRAM in LLM Inference

For Large Language Models, GPU VRAM (Video RAM) is not just a resource but a fundamental constraint. Models like Llama 3 or Mixtral can require tens or hundreds of gigabytes of memory to be loaded and run effectively, especially when handling large context windows or large inference batch sizes. Whether a single GPU can hold the entire model determines whether techniques such as tensor parallelism or pipeline parallelism are needed to split it across devices, and these techniques in turn introduce communication overhead.
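
As a rough illustration of why these footprints reach tens or hundreds of gigabytes, the sketch below estimates the memory taken by model weights and by the key-value (KV) cache, which grows with context length and batch size. The model shape (70 billion parameters, 80 layers, 8 KV heads of dimension 128) and the function names are illustrative assumptions, not figures from a vendor datasheet. Activations and framework overhead are ignored.

```python
GIB = 1024 ** 3  # bytes per gibibyte


def weight_bytes(n_params: float, bytes_per_param: float) -> float:
    """Memory needed to hold the model weights alone."""
    return n_params * bytes_per_param


def kv_cache_bytes(n_layers: int, n_kv_heads: int, head_dim: int,
                   seq_len: int, batch_size: int, bytes_per_elem: int = 2) -> int:
    """Memory for cached keys and values (the factor 2) across all layers."""
    return 2 * n_layers * n_kv_heads * head_dim * seq_len * batch_size * bytes_per_elem


# Hypothetical 70B-parameter model stored in FP16 (2 bytes per parameter).
weights = weight_bytes(70e9, 2)

# Assumed shape: 80 layers, 8 KV heads, head dimension 128,
# an 8192-token context and a batch of 4 sequences.
kv = kv_cache_bytes(n_layers=80, n_kv_heads=8, head_dim=128,
                    seq_len=8192, batch_size=4)

print(f"weights:  {weights / GIB:.1f} GiB")
print(f"KV cache: {kv / GIB:.1f} GiB")
print(f"total:    {(weights + kv) / GIB:.1f} GiB")
```

Under these assumptions the weights alone come to 140 GB, more than a single 80GB GPU can hold, so the model would have to be split across devices. The KV cache term scales linearly with both context length and batch size, so doubling either one doubles that part of the footprint.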

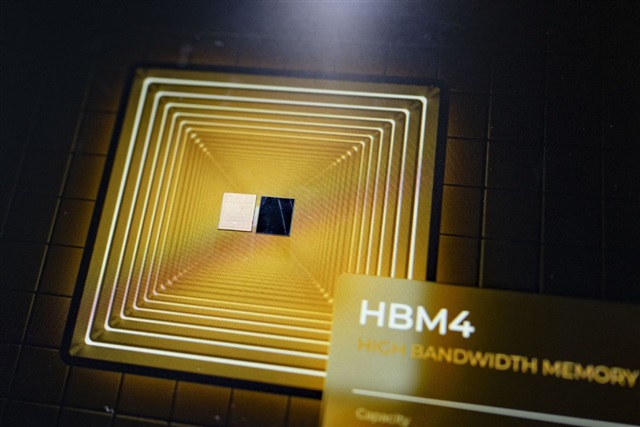

Beyond capacity, memory bandwidth is crucial. LLM inference is often "memory-bound," meaning that token generation speed depends more on how quickly model weights and cached attention state can be read from memory than on raw compute throughput. GPUs with HBM (High Bandwidth Memory) offer a significant advantage in this context, but their cost and availability can be a barrier for many on-premise deployments.
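
The memory-bound behavior described above can be approximated with a simple back-of-the-envelope bound: if every decode step has to stream the weights (and each sequence's cached state) from memory, throughput cannot exceed bandwidth divided by bytes read per step. The sketch below is a rough model under that assumption. The 2 TB/s bandwidth, the 7B-parameter FP16 model, and the function name are hypothetical placeholders, and real systems fall below this ceiling.

```python
def max_decode_tokens_per_s(bandwidth_bytes_per_s: float,
                            weight_bytes: float,
                            kv_bytes_per_seq: float = 0.0,
                            batch_size: int = 1) -> float:
    """Upper bound on generated tokens per second when decoding is memory-bound.

    Assumes each decode step reads the weights once (shared by the whole batch)
    plus the cached state of every sequence in the batch.
    """
    bytes_per_step = weight_bytes + batch_size * kv_bytes_per_seq
    steps_per_s = bandwidth_bytes_per_s / bytes_per_step
    return steps_per_s * batch_size  # one new token per sequence per step


BANDWIDTH = 2e12      # hypothetical GPU with 2 TB/s of memory bandwidth
WEIGHTS = 7e9 * 2     # hypothetical 7B-parameter model in FP16

for batch in (1, 8, 32):
    bound = max_decode_tokens_per_s(BANDWIDTH, WEIGHTS,
                                    kv_bytes_per_seq=1e9, batch_size=batch)
    print(f"batch {batch:>2}: at most {bound:,.0f} tokens/s")
```

In this simplified model, batching helps because the weight reads are amortized over more sequences, but the per-sequence cached state eventually dominates the traffic and the gains flatten out.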

Implications for On-Premise Deployments and TCO

For CTOs, DevOps leads, and infrastructure architects evaluating self-hosted solutions for AI workloads, memory bottlenecks directly affect the Total Cost of Ownership (TCO). Investing in GPUs with more VRAM and bandwidth may raise initial CapEx, but it can reduce long-term OpEx through greater efficiency, fewer servers, and lower power consumption for a given throughput. The choice between GPUs with 40GB, 80GB, or more VRAM becomes a strategic decision that balances performance, cost, and scalability.
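
The CapEx versus OpEx trade-off above can be sketched as a small calculation: count how many GPUs a given memory footprint requires at each VRAM size, then add purchase cost and energy cost. Every number below (the 150 GB footprint, prices, wattages, electricity rate, and the 90% usable-VRAM fraction) is a hypothetical placeholder to be replaced with real quotes, and the function names are illustrative only.

```python
import math


def gpus_needed(footprint_gb: float, vram_gb: float,
                usable_fraction: float = 0.9) -> int:
    """GPUs required to hold a footprint, leaving some VRAM as headroom."""
    return math.ceil(footprint_gb / (vram_gb * usable_fraction))


def simple_tco(n_gpus: int, unit_price: float, watts: float,
               hours: float, price_per_kwh: float) -> float:
    """Purchase cost plus energy cost; ignores servers, cooling, and staff."""
    capex = n_gpus * unit_price
    energy_kwh = n_gpus * watts / 1000 * hours
    return capex + energy_kwh * price_per_kwh


FOOTPRINT_GB = 150           # hypothetical model plus cache footprint
HOURS = 3 * 365 * 24         # three years of continuous operation

# (VRAM in GB, placeholder unit price, placeholder power draw in watts)
for vram, price, watts in ((40, 10_000, 400), (80, 20_000, 500)):
    n = gpus_needed(FOOTPRINT_GB, vram)
    total = simple_tco(n, price, watts, HOURS, price_per_kwh=0.20)
    print(f"{vram} GB GPUs: {n} needed, placeholder TCO {total:,.0f}")
```

The result depends entirely on the inputs, so the value of a sketch like this is in making the assumptions explicit, not in the specific totals it prints.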

Data sovereignty and compliance requirements often push organizations towards on-premise or air-gapped deployments. In these scenarios, efficient management of hardware resources, including GPU memory, is essential to ensure that AI models can run efficiently and securely within the local infrastructure. AI-RADAR offers analytical frameworks on /llm-onpremise to help those evaluating on-premise deployments weigh the trade-offs between initial cost, performance, and scalability, providing tools to analyze the constraints and opportunities of different configurations.

Future Prospects and Mitigation Strategies

Addressing memory bottlenecks requires a multi-faceted approach. On the hardware front, continued innovation in memory technologies and interconnects (such as NVLink or CXL) aims to overcome these limitations. On the software front, techniques like quantization (e.g., from FP16 to INT8 or INT4) and model pruning can significantly reduce memory footprint and bandwidth requirements, often while maintaining an acceptable level of accuracy.
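
To make the effect of quantization concrete, the sketch below shows how weight footprint shrinks with bit width and implements a minimal symmetric per-tensor INT8 scheme in plain Python. This is one simple quantization scheme chosen for illustration, not the method used by any particular framework. The 70B parameter count and all function names are assumptions.

```python
def footprint_gb(n_params: float, bits_per_param: int) -> float:
    """Weight footprint in gigabytes (1 GB = 1e9 bytes) at a given precision."""
    return n_params * bits_per_param / 8 / 1e9


def quantize_int8(values: list[float]) -> tuple[list[int], float]:
    """Symmetric per-tensor quantization: map floats onto integers in [-127, 127]."""
    max_abs = max(abs(v) for v in values)
    scale = max_abs / 127 if max_abs > 0 else 1.0
    quantized = [max(-127, min(127, round(v / scale))) for v in values]
    return quantized, scale


def dequantize(quantized: list[int], scale: float) -> list[float]:
    """Recover approximate float values from the integers and the scale."""
    return [q * scale for q in quantized]


# Hypothetical 70B-parameter model at three precisions.
for name, bits in (("FP16", 16), ("INT8", 8), ("INT4", 4)):
    print(f"{name}: {footprint_gb(70e9, bits):.0f} GB")

weights = [0.5, -1.27, 0.0, 1.0]          # toy weight values
q, scale = quantize_int8(weights)
restored = dequantize(q, scale)
worst = max(abs(a - b) for a, b in zip(weights, restored))
print(f"quantized: {q}, worst round-trip error: {worst:.6f}")
```

Halving the bit width halves both the memory the weights occupy and the bytes that must be streamed per token. The cost is a rounding error of at most half the scale per weight in this scheme, and its effect on model accuracy has to be measured on the task at hand.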

Framework developers and machine learning engineers are constantly seeking more efficient algorithms for memory management and inference optimization. An organization's ability to implement these strategies, combined with careful infrastructural planning, will be crucial to unlocking the full potential of AI at scale, ensuring that GPU efficiency is not compromised by memory limitations.
