Behind the Scenes of Task Manager: The Truth About CPU Usage

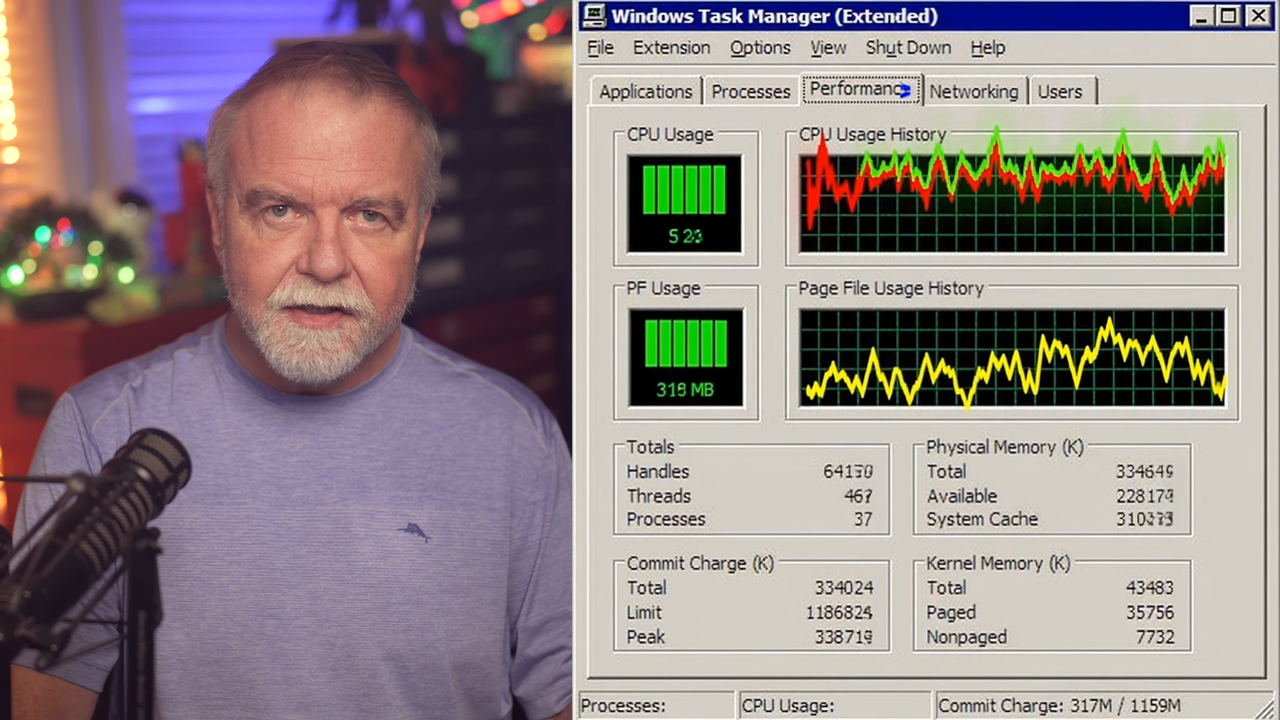

Windows Task Manager is one of the most familiar tools for millions of users and IT professionals, a go-to for monitoring system performance and managing processes. However, its representation of CPU utilization has often raised questions and even frustration. Dave Plummer, the former Microsoft engineer who conceived and developed the original version of Task Manager, recently explained in detail why the CPU utilization metric can appear "deceptive" or fail to match user expectations. His analysis shows how much complexity hides in a task that seems trivial at first glance.

Plummer highlighted how measuring processor activity is not a simple direct reading, but rather the result of a sophisticated interpretation of clock cycles and processor states. This revelation offers a crucial perspective for anyone involved in infrastructure management, emphasizing the importance of understanding the methodologies underlying monitoring tools.

The Hidden Complexity of CPU Measurement

Measuring CPU utilization in a modern operating system is an exercise in balancing precision and readability. Contemporary CPUs are not simple monolithic computing units; they are complex architectures with multiple cores, logical threads (like Intel's Hyper-Threading), various operating modes (user mode, kernel mode), and power-saving states. A single percentage value for "CPU utilization" must synthesize all these dynamics.
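To see how much a single number can hide, consider a minimal sketch in Python. It assumes the headline figure is a plain average of per-logical-processor percentages. The article does not say how Task Manager combines cores, so the function name `aggregate_utilization` and the averaging rule are illustrative assumptions, not a description of the real tool.

```python
def aggregate_utilization(per_core: list[float]) -> float:
    """Collapse per-logical-processor percentages into one figure.

    Assumption for illustration: a simple arithmetic mean.
    """
    if not per_core:
        raise ValueError("need at least one logical processor")
    return sum(per_core) / len(per_core)


# Hypothetical 8-thread machine: one thread saturated, seven idle.
one_hot_core = [100.0] + [0.0] * 7
headline = aggregate_utilization(one_hot_core)
print(f"headline CPU: {headline}%")  # headline CPU: 12.5%
```

Under this assumption, a single-threaded process that has fully saturated one logical processor shows up as only 12.5% overall, which is one reason an aggregate value can feel out of step with what the user experiences.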

The main challenge lies in distinguishing between the time the CPU is actively engaged in useful computations and the time it is waiting for data or instructions, or simply in an idle state. Task Manager's algorithms, as explained by Plummer, must constantly sample processor activity, calculating the percentage of time cores have been active relative to the total time. This sampling can lead to rapid fluctuations and values that do not always reflect the human perception of "load," especially with bursty workloads or processes that rapidly alternate between active and inactive states.
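The sampling idea described above can be sketched in a few lines of Python. This is a simplified model, not Task Manager's actual code: it assumes the operating system exposes cumulative idle and total time counters per core, and the names `CpuSample` and `utilization` are hypothetical. Utilization over an interval is then the non-idle share of the time elapsed between two snapshots.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class CpuSample:
    """Cumulative counters for one core, in consistent time units (here: ms)."""
    idle: int
    total: int


def utilization(prev: CpuSample, curr: CpuSample) -> float:
    """Percentage of the interval between two samples spent not idle."""
    total_delta = curr.total - prev.total
    if total_delta <= 0:
        return 0.0  # no time elapsed between samples, nothing to report
    idle_delta = curr.idle - prev.idle
    return 100.0 * (total_delta - idle_delta) / total_delta


# Hypothetical bursty core: fully busy for 100 ms, then idle for 900 ms.
start = CpuSample(idle=0, total=0)
after_burst = CpuSample(idle=0, total=100)
after_second = CpuSample(idle=900, total=1000)

fine_busy = utilization(start, after_burst)         # 100.0 over the burst
fine_idle = utilization(after_burst, after_second)  # 0.0 over the quiet part
coarse = utilization(start, after_second)           # 10.0 over the full second
```

The same workload reads as 100%, 0%, or 10% depending only on where the sample boundaries fall, which illustrates why bursty processes produce values that jump around or look lower than the perceived load.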

Implications for AI Workloads and On-Premise Deployments

For professionals managing complex infrastructures, particularly those dedicated to artificial intelligence and Large Language Model (LLM) workloads, a deep understanding of system metrics is fundamental. Even though LLM inference and training are often accelerated by high-performance GPUs, the CPU still plays a critical role in the overall pipeline. It handles data pre-processing, container orchestration, memory management, and communication between various hardware components.

Misinterpreting CPU utilization can lead to suboptimal decisions in on-premise deployment. For example, an apparently low CPU utilization value might mask bottlenecks due to I/O latency or operating system inefficiencies, while a sudden peak might be a transient event and not an indicator of constant overload. For those evaluating self-hosted deployments, understanding these nuances is essential for optimizing TCO, ensuring data sovereignty, and maximizing throughput without excessive investment in unnecessary hardware. AI-RADAR offers analytical frameworks on /llm-onpremise to evaluate these trade-offs in a structured manner.
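One way to separate a transient peak from constant overload is to look at how long readings stay high rather than at any single value. The Python sketch below is an illustration under assumed parameters: the 90% threshold, the five-sample minimum, and the function names are hypothetical choices, not recommendations from Plummer or from any monitoring product.

```python
def longest_run_above(samples: list[float], threshold: float) -> int:
    """Length of the longest streak of consecutive samples at or above threshold."""
    longest = current = 0
    for value in samples:
        if value >= threshold:
            current += 1
            longest = max(longest, current)
        else:
            current = 0
    return longest


def is_sustained_overload(
    samples: list[float],
    threshold: float = 90.0,   # assumed cutoff for "high" utilization
    min_consecutive: int = 5,  # assumed number of samples that counts as sustained
) -> bool:
    """True only if utilization stayed high for min_consecutive samples in a row."""
    return longest_run_above(samples, threshold) >= min_consecutive


# Hypothetical one-second readings from two hosts.
transient_spike = [20.0, 25.0, 98.0, 22.0, 18.0, 21.0]
constant_load = [92.0, 95.0, 97.0, 93.0, 96.0, 94.0]

print(is_sustained_overload(transient_spike))  # False
print(is_sustained_overload(constant_load))    # True
```

A check like this only addresses the peak side of the problem. A low reading that hides an I/O bottleneck would need additional signals, such as disk or network wait metrics, which CPU utilization alone does not provide.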

Transparency and Infrastructure Optimization

Dave Plummer's explanation is not just a historical anecdote but a reminder of the intrinsic complexity hidden behind seemingly simple user interfaces. For CTOs, DevOps leads, and infrastructure architects, this underscores the importance of looking beyond superficial metrics and delving into the workings of monitoring tools.

The ability to correctly interpret CPU utilization data, considering sampling methodologies and underlying hardware architectures, is crucial for capacity planning, troubleshooting, and resource optimization. In an era where AI workloads demand extreme performance and granular control over hardware, a clear understanding of how operating systems measure and report processor activity becomes a strategic asset for ensuring the efficiency and reliability of self-hosted infrastructures.
