CPU Monitoring: The Legacy of Task Manager and the On-Premise Challenges

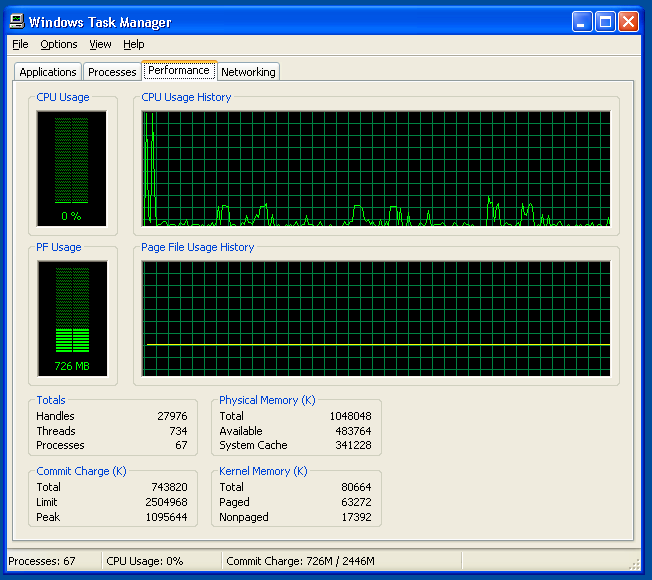

Windows Task Manager, with its iconic CPU usage meter, served for years as an immediate window into a system's performance. As the engineer who developed it has revealed, its logic did not rely on “magic” values but on a relatively simple mechanism: a timer and a series of kernel calls. That simplicity, effective for its era, contrasts sharply with the complexity of resource monitoring in modern IT environments, especially those dedicated to Large Language Models (LLMs) and other AI workloads.
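The article does not show Task Manager's actual code, so the following is not its implementation. It is a minimal sketch of the general "timer plus kernel counters" idea. It assumes a hypothetical `read_times` callable that returns cumulative system-wide idle, kernel and user times, in the way the Windows `GetSystemTimes` call does. With `GetSystemTimes`, kernel time also includes idle time. Utilization is then the busy share of the elapsed time between two snapshots.

```python
import time
from dataclasses import dataclass
from typing import Callable, List


@dataclass(frozen=True)
class CpuTimes:
    """Cumulative system-wide CPU times (e.g. in 100-ns ticks)."""
    idle: int
    kernel: int  # assumption: includes idle time, as with Windows GetSystemTimes
    user: int


def cpu_utilization(prev: CpuTimes, curr: CpuTimes) -> float:
    """Percentage of the interval the CPU was busy, computed from two snapshots."""
    idle = curr.idle - prev.idle
    total = (curr.kernel - prev.kernel) + (curr.user - prev.user)
    if total <= 0:
        return 0.0  # no time elapsed between snapshots
    return 100.0 * (total - idle) / total


def sample(read_times: Callable[[], CpuTimes], interval_s: float = 1.0,
           samples: int = 5) -> List[float]:
    """Timer-driven loop: take a snapshot, wait, take another, report the delta."""
    readings = []
    prev = read_times()
    for _ in range(samples):
        time.sleep(interval_s)
        curr = read_times()
        readings.append(cpu_utilization(prev, curr))
        prev = curr
    return readings
```

Consider two snapshots, `CpuTimes(0, 0, 0)` and then `CpuTimes(idle=600, kernel=800, user=200)`. Between them, 400 of 1000 ticks were busy, so `cpu_utilization` returns `40.0`. Keeping `read_times` as a parameter separates the platform-specific counter source from the arithmetic.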

Today, CPU utilization is only a small part of a much broader picture. For professionals who manage complex infrastructure, granular visibility into hardware performance has become crucial. This is especially true for on-premise LLM deployments. There, every component, from GPU VRAM to network latency, directly affects operational efficiency and Total Cost of Ownership (TCO).

From Kernel Calls to Advanced Monitoring

The Task Manager meter gave a general indication of CPU activity, but AI workloads demand far finer detail. Knowing that a CPU is busy is not enough. You need to understand how GPU resources are being used, including VRAM occupancy, memory throughput and clock frequency. LLM inference and training are often GPU-bound, so CPU metrics matter less than GPU metrics.
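As an illustration, the GPU metrics mentioned above can be collected on NVIDIA hardware through NVML. The sketch below uses the `pynvml` Python bindings and assumes the package and an NVIDIA driver are installed. NVML's "memory utilization" is the percentage of time device memory was being read or written. It is a proxy for memory throughput, not a bandwidth figure in GB/s. The `GpuSample` type and `format_sample` helper are hypothetical.

```python
from dataclasses import dataclass
from typing import List


@dataclass(frozen=True)
class GpuSample:
    index: int
    vram_used_bytes: int
    vram_total_bytes: int
    gpu_util_pct: int   # % of time one or more kernels were executing
    mem_util_pct: int   # % of time device memory was being read or written
    sm_clock_mhz: int   # current SM clock

    @property
    def vram_pct(self) -> float:
        return 100.0 * self.vram_used_bytes / self.vram_total_bytes


def read_gpu_samples() -> List[GpuSample]:
    """Query every visible NVIDIA GPU once via NVML (requires pynvml and a driver)."""
    import pynvml  # imported lazily so the rest of the module works without it

    pynvml.nvmlInit()
    try:
        samples = []
        for i in range(pynvml.nvmlDeviceGetCount()):
            handle = pynvml.nvmlDeviceGetHandleByIndex(i)
            mem = pynvml.nvmlDeviceGetMemoryInfo(handle)
            util = pynvml.nvmlDeviceGetUtilizationRates(handle)
            clock = pynvml.nvmlDeviceGetClockInfo(handle, pynvml.NVML_CLOCK_SM)
            samples.append(GpuSample(i, mem.used, mem.total, util.gpu, util.memory, clock))
        return samples
    finally:
        pynvml.nvmlShutdown()


def format_sample(s: GpuSample) -> str:
    """One-line summary suitable for a log or dashboard."""
    return (f"GPU{s.index}: VRAM {s.vram_pct:.1f}% | util {s.gpu_util_pct}% | "
            f"mem util {s.mem_util_pct}% | SM {s.sm_clock_mhz} MHz")
```

On a machine with GPUs, `for s in read_gpu_samples(): print(format_sample(s))` prints one line per device. Polling this on a timer gives the GPU equivalent of the CPU meter.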

Advanced monitoring also covers metrics such as p95 latency (the 95th percentile of latency), batch size, and the number of tokens per second (tokens/sec) a model can process. These data are essential for optimizing inference pipelines, balancing workloads and identifying bottlenecks. Modern tools go well beyond simple kernel calls. They integrate hardware- and framework-specific APIs that provide real-time telemetry on every aspect of system performance.
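To make p95 latency and tokens/sec concrete, here is a minimal sketch that derives both from per-request records collected over a fixed measurement window. The `RequestRecord` structure is hypothetical, and the nearest-rank method is only one of several common percentile definitions.

```python
import math
from dataclasses import dataclass
from typing import Dict, List, Sequence


@dataclass(frozen=True)
class RequestRecord:
    latency_s: float    # end-to-end latency of one inference request
    output_tokens: int  # tokens generated for that request


def percentile_nearest_rank(values: Sequence[float], p: float) -> float:
    """Nearest-rank percentile: smallest value with at least p% of samples <= it."""
    if not values:
        raise ValueError("no samples")
    ordered = sorted(values)
    rank = math.ceil(p * len(ordered) / 100)  # 1-based rank
    return ordered[max(rank, 1) - 1]


def summarize(records: List[RequestRecord], window_s: float) -> Dict[str, float]:
    """p95 latency and aggregate tokens/sec over one measurement window."""
    latencies = [r.latency_s for r in records]
    total_tokens = sum(r.output_tokens for r in records)
    return {
        "requests": len(records),
        "p95_latency_s": percentile_nearest_rank(latencies, 95),
        "tokens_per_s": total_tokens / window_s,
    }
```

Suppose 20 requests arrive with latencies 0.1 s, 0.2 s, ..., 2.0 s, each producing 50 tokens, over a 10-second window. The p95 latency is 1.9 s (the 19th of 20 sorted values) and throughput is 100 tokens/sec. Unlike an average, p95 shows the slow tail that users actually notice.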

Implications for On-Premise Deployments

For organizations that choose a self-hosted approach for their LLMs, the ability to monitor and manage hardware proactively is a differentiator. On-premise deployments offer advantages in data sovereignty, compliance and control, but they also demand deeper infrastructure management. Knowing exactly how resources are consumed enables informed decisions about buying new silicon, configuring bare-metal servers and reallocating existing resources.

Ineffective monitoring can lead to under-utilized expensive GPUs, slow response times for user-facing applications, or an unexpectedly high TCO caused by energy inefficiencies. Conversely, full visibility makes it possible to get the most out of the hardware investment and plan targeted upgrades. It also helps keep air-gapped environments performing well without compromising security. For those evaluating on-premise deployments, AI-RADAR offers analytical frameworks at /llm-onpremise for assessing the specific trade-offs of these infrastructure choices.
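Under-utilization raises cost per token because a GPU draws idle power and amortizes its purchase price whether or not it is serving requests. The sketch below models this effect. It assumes power scales linearly between an idle and a peak draw, and that throughput scales linearly with utilization. Real hardware is less tidy, and every number in the example is hypothetical.

```python
def cost_per_million_tokens(utilization: float, *, idle_w: float, peak_w: float,
                            peak_tokens_per_s: float, price_per_kwh: float,
                            hw_cost_per_hour: float = 0.0) -> float:
    """Energy (plus optional amortized hardware) cost per 1M generated tokens.

    Assumptions (simplified model, not measured behavior):
    - average power = idle_w + (peak_w - idle_w) * utilization
    - throughput     = peak_tokens_per_s * utilization
    """
    if not 0 < utilization <= 1:
        raise ValueError("utilization must be in (0, 1]")
    avg_power_kw = (idle_w + (peak_w - idle_w) * utilization) / 1000
    hourly_cost = avg_power_kw * price_per_kwh + hw_cost_per_hour
    tokens_per_hour = peak_tokens_per_s * utilization * 3600
    return hourly_cost / tokens_per_hour * 1_000_000


# Hypothetical GPU: 100 W idle, 700 W peak, 1000 tokens/s at full load, 0.25 per kWh.
params = dict(idle_w=100, peak_w=700, peak_tokens_per_s=1000, price_per_kwh=0.25)
for u in (1.0, 0.5, 0.25):
    print(f"utilization {u:.0%}: {cost_per_million_tokens(u, **params):.4f} per 1M tokens")
```

With these illustrative figures, the energy cost per million tokens rises from about 0.049 at full load to about 0.069 at 25% utilization. Setting a non-zero `hw_cost_per_hour` widens the gap further.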

The Future of Hardware Control

The path from Task Manager's simple CPU meter to today's complex monitoring dashboards reflects how computational needs have evolved. As LLMs and other AI techniques advance, demand for control and visibility over hardware will keep growing. The question is no longer just “how busy is the CPU?”. It now calls for a multidimensional analysis covering GPU health, memory efficiency, network latency and overall system throughput.

This evolution underscores the importance of investing in robust, AI-specific monitoring solutions. For CTOs, DevOps leads and infrastructure architects, the ability to interpret this data is key to staying competitive, preserving data sovereignty and optimizing AI operations. The future of on-premise LLM deployments will increasingly depend on turning raw hardware performance data into actionable insights.
