The Legacy of a Natural Language Pioneer

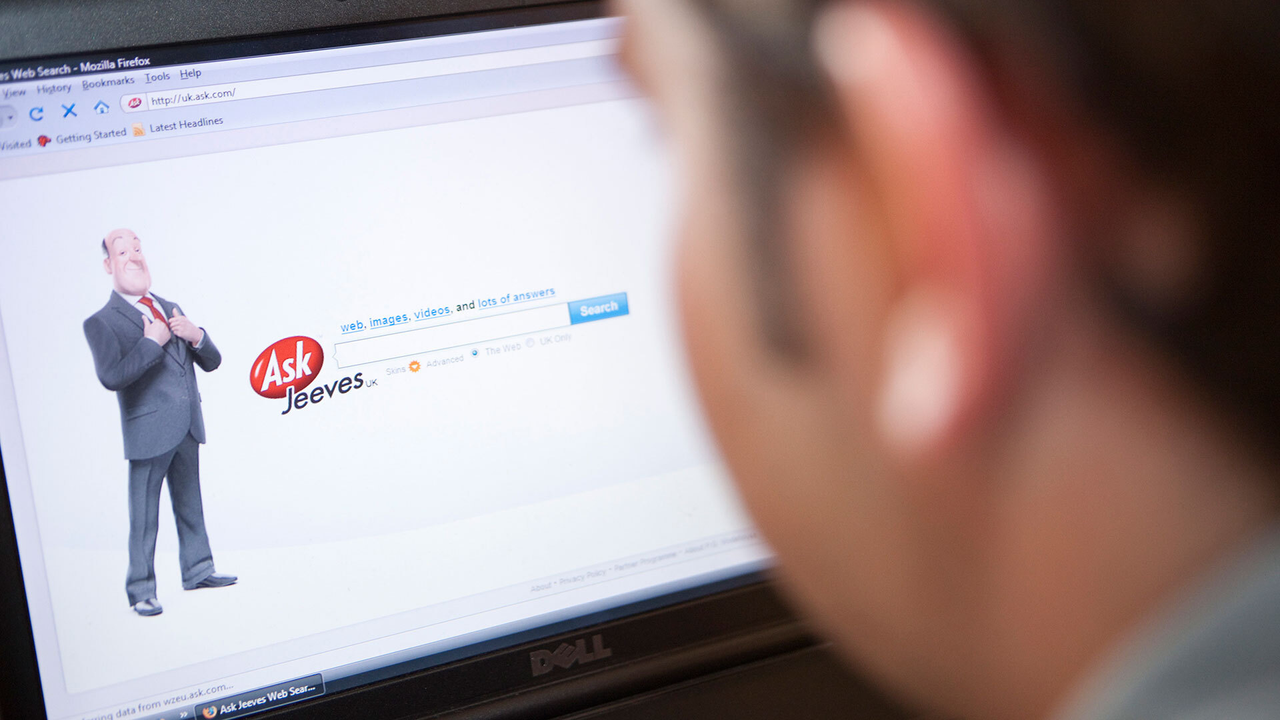

The digital landscape bids farewell to a piece of its history: Ask Jeeves, one of the defining search engines of the 1990s, has officially ceased operations, leaving behind only a placeholder page. Known for an interface that let users phrase questions in natural language, Ask Jeeves was an early and significant attempt to make interaction with search systems more intuitive and human. Although its technology relied on predefined rules and patterns, its vision of a digital assistant capable of understanding human language anticipated, by decades, the capabilities we now associate with modern Large Language Models (LLMs).

The shutdown of the website by its parent company is not just the end of a brand, but also a moment to reflect on how far machine capabilities have progressed in processing and responding to human language. From Ask Jeeves' early and rudimentary implementations, the industry has made giant strides, driven by decades of research in computational linguistics and artificial intelligence.

From Symbolic Processing to Large Language Models

Ask Jeeves' approach to natural language processing was predominantly symbolic, relying on grammars and ontologies to interpret user queries. This method, while innovative for its time, scaled poorly and was inflexible: adapting it to new expressions or domains required intensive manual maintenance. The field then moved to statistical models and, more recently, to the Transformer architectures that underpin current LLMs.
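To make the limitation of rule-based matching concrete, here is a minimal sketch of the general technique. It is purely illustrative and is not Ask Jeeves' actual implementation: the `RULES` table, the intent names, and the `match_question` helper are all hypothetical.

```python
import re
from typing import Dict, Optional, Tuple

# Hypothetical hand-written rules: each pairs a question pattern with an intent label.
# Every new phrasing needs a new rule, which is where the maintenance cost comes from.
RULES = [
    (re.compile(r"^what is the capital of (?P<place>[\w\s]+)\??$", re.IGNORECASE),
     "capital_lookup"),
    (re.compile(r"^how (?:do|can) i (?P<task>[\w\s]+)\??$", re.IGNORECASE),
     "howto_lookup"),
]


def match_question(question: str) -> Optional[Tuple[str, Dict[str, str]]]:
    """Return (intent, extracted slots) for the first matching rule, else None."""
    text = question.strip()
    for pattern, intent in RULES:
        found = pattern.match(text)
        if found:
            slots = {name: value.strip() for name, value in found.groupdict().items()}
            return intent, slots
    return None  # No rule covers this phrasing.


if __name__ == "__main__":
    print(match_question("What is the capital of France?"))
    print(match_question("Which city is France's capital?"))
```

The first question matches a rule, while the second, a simple paraphrase of the same question, matches nothing until someone writes another pattern for it.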

These modern models, trained on massive amounts of textual data, do not merely recognize patterns but learn complex representations of language, allowing for much more sophisticated text understanding and generation. Their ability to perform tasks such as summarization, translation, classification, and question answering in a contextually relevant manner has redefined expectations for human-machine interaction. However, the power of these LLMs brings new challenges, especially for companies considering an on-premise deployment.

The Challenges of On-Premise Deployment for LLMs

For CTOs, DevOps leads, and infrastructure architects, managing and deploying LLMs in self-hosted or air-gapped environments raises a series of critical considerations. Unlike the simpler systems of the past, LLMs require significant computational resources, particularly for inference. The choice of hardware, such as GPUs with large amounts of VRAM (e.g., A100 80GB or H100 SXM5), becomes fundamental to ensuring adequate throughput and acceptable latency. Model quantization and the optimization of serving frameworks are essential steps to maximize efficiency and reduce resource consumption.
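A rough back-of-the-envelope calculation shows why VRAM and quantization matter. The sketch below estimates only the memory needed to hold model weights (parameter count times bits per parameter); the 70-billion-parameter model and the 20% overhead for activations and KV cache are illustrative assumptions, not measurements, and real usage depends on context length, batch size, and the serving stack.

```python
def weight_memory_gb(num_params: int, bits_per_param: int) -> float:
    """Memory for model weights alone, in GB (1 GB = 1e9 bytes)."""
    return num_params * bits_per_param / 8 / 1e9


def fits_in_vram(num_params: int, bits_per_param: int,
                 vram_gb: float, overhead: float = 0.2) -> bool:
    """Check whether weights plus an assumed fractional overhead fit in vram_gb.

    The overhead default (20%) is a placeholder assumption for activations
    and KV cache; tune it for the actual workload.
    """
    needed = weight_memory_gb(num_params, bits_per_param) * (1 + overhead)
    return needed <= vram_gb


if __name__ == "__main__":
    params = 70_000_000_000  # hypothetical 70B-parameter model
    for bits in (16, 8, 4):
        size = weight_memory_gb(params, bits)
        ok = fits_in_vram(params, bits, vram_gb=80)
        print(f"{bits}-bit: {size:.0f} GB of weights, fits on one 80 GB GPU: {ok}")
```

Under these assumptions, the 16-bit weights alone need about 140 GB and must be split across several GPUs, while a 4-bit quantized version needs about 35 GB and fits on a single 80 GB card.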

Total Cost of Ownership (TCO) is a decisive factor. While the cloud offers flexibility, on-premise deployment can provide long-term economic advantages, in addition to ensuring greater data sovereignty and regulatory compliance, crucial aspects for regulated sectors such as finance or healthcare. Building a robust deployment pipeline, including strategies for fine-tuning and model updates, is an infrastructure investment that requires careful planning. For those evaluating on-premise deployment, AI-RADAR offers analytical frameworks on /llm-onpremise to assess the trade-offs between costs, performance, and control.
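The long-term economic argument can be framed as a simple break-even calculation: the upfront hardware spend is recovered through the monthly savings relative to a cloud bill. The sketch below is a deliberately simplified model with hypothetical figures; it ignores depreciation, staffing, utilization, and hardware refresh cycles, all of which a real TCO analysis would include.

```python
from typing import Optional


def break_even_months(capex: float, onprem_monthly_opex: float,
                      cloud_monthly_cost: float) -> Optional[float]:
    """Months until cumulative on-premise cost drops below cumulative cloud cost.

    Returns None when on-premise running costs are not lower than the cloud
    bill, in which case the upfront investment is never recovered.
    """
    monthly_savings = cloud_monthly_cost - onprem_monthly_opex
    if monthly_savings <= 0:
        return None
    return capex / monthly_savings


if __name__ == "__main__":
    # All figures are hypothetical placeholders, not market prices.
    months = break_even_months(capex=240_000,
                               onprem_monthly_opex=5_000,
                               cloud_monthly_cost=15_000)
    print(f"Break-even after {months:.0f} months")
```

With these placeholder numbers, the hardware pays for itself after 24 months; if the cloud bill is no higher than the on-premise running costs, the function returns `None` because there is no break-even point.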

The Future of Natural Language and Infrastructure Control

Ask Jeeves' farewell reminds us of the long journey artificial intelligence has made in the field of natural language. From a pioneer that sought to interpret our questions with fixed rules, we have arrived at systems that generate complex texts and answer queries with surprising fluency. This evolution is not only technological but also strategic, especially for companies that wish to maintain control over their data and infrastructure.

The decision to adopt a self-hosted approach for LLMs is not trivial and implies a deep understanding of hardware specifications, security needs, and TCO implications. As the memory of Ask Jeeves fades, the commitment to increasingly powerful and controllable artificial intelligence solutions remains a priority for those operating at the heart of modern technological infrastructure.
