AI in Browsers: A New Interaction Paradigm

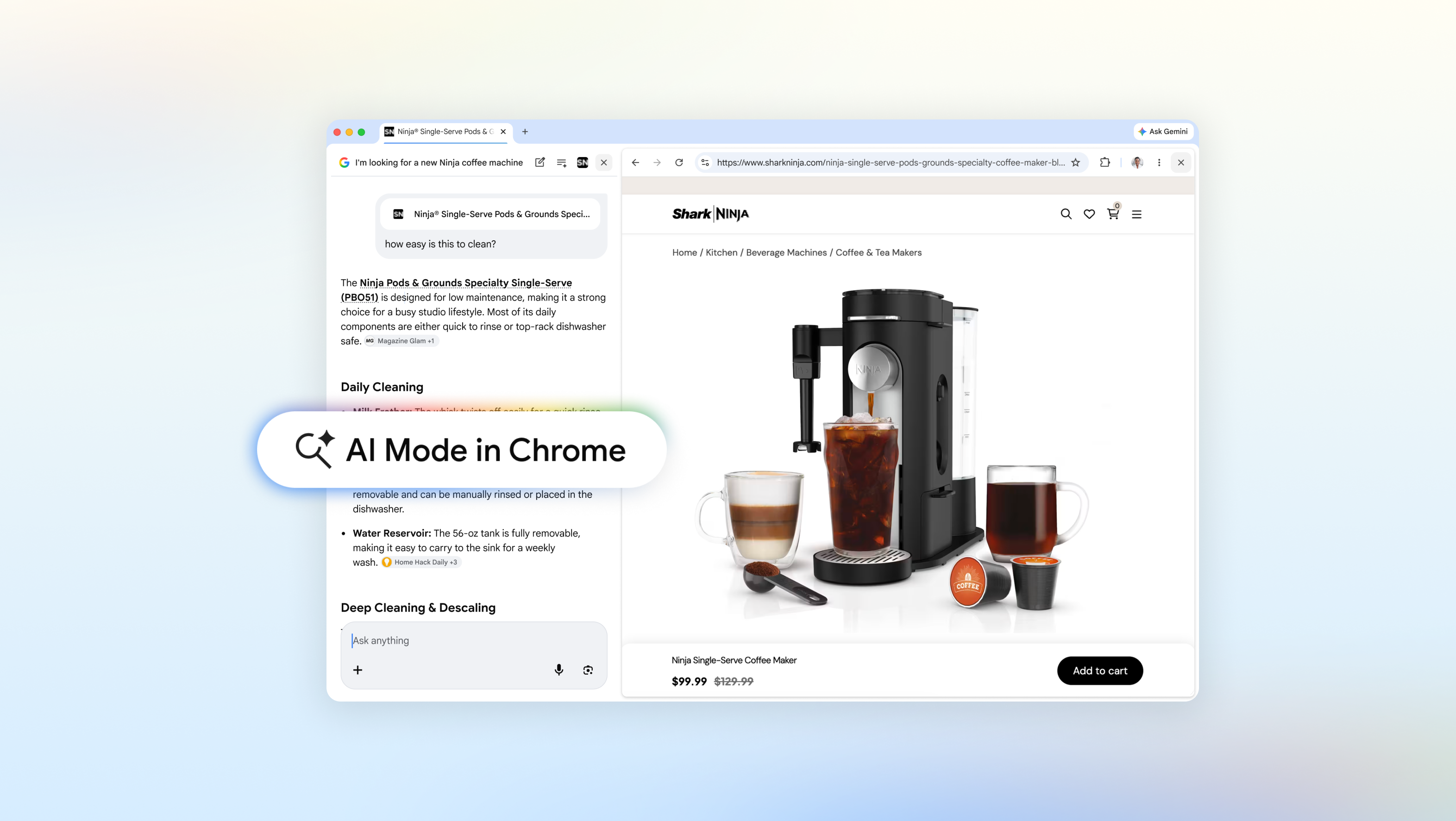

Recent developments in the web browser landscape, exemplified by updates to Chrome's "AI Mode," are redefining how users interact with online content. Integrating Large Language Model (LLM) capabilities into the browser promises to transform the browsing experience and open new ways to explore and interact with content. Behind these user-facing innovations, however, lie complex architectural and infrastructure decisions that warrant in-depth analysis.

For businesses and IT professionals, the adoption of AI features in consumer products raises fundamental questions. The central one is where data processing and model execution take place, both physically and logically. Is inference performed entirely in the cloud, on the client device (edge computing), or in a hybrid arrangement? The answer has direct implications for data sovereignty, latency, and Total Cost of Ownership (TCO).

Technical and Architectural Details for LLM Inference

Implementing advanced AI features, particularly those built on LLMs, requires significant computational resources. Traditionally, inference for large LLMs has run on scalable cloud infrastructure equipped with high-performance GPUs such as the NVIDIA A100 or H100, whose large VRAM capacity is needed to host the models and sustain adequate throughput. This approach scales well and reduces the load on client devices, but it entails reliance on third parties and raises potential data privacy concerns.

An alternative, increasingly relevant for specific scenarios, is to run AI inference directly on the device or in self-hosted environments. This requires more compact models, often obtained through quantization (storing weights at lower numeric precision), and client hardware with sufficient computing capability. While on-premises or edge execution can improve latency and strengthen data sovereignty, it presents challenges in terms of management, model updates, and minimum hardware requirements. For companies considering integrating LLMs into internal applications or products with stringent compliance requirements, evaluating on-premises deployment is crucial.
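
To make the effect of quantization on hardware requirements concrete, the following minimal sketch estimates the memory needed to hold a model's weights at different precisions. The 7-billion-parameter model, the 8 GiB device budget, and the 20% overhead allowance for activations and cache are illustrative assumptions, not figures from any specific browser or product.

```python
def weight_memory_gib(num_params: float, bits_per_weight: int) -> float:
    """Approximate memory for the model weights alone, in GiB."""
    total_bytes = num_params * bits_per_weight / 8
    return total_bytes / 2**30


def fits_on_device(
    num_params: float,
    bits_per_weight: int,
    device_memory_gib: float,
    overhead_fraction: float = 0.2,  # assumed allowance for activations, cache, runtime
) -> bool:
    """Rough check: do the weights plus an assumed overhead fit in device memory?"""
    needed = weight_memory_gib(num_params, bits_per_weight) * (1 + overhead_fraction)
    return needed <= device_memory_gib


if __name__ == "__main__":
    params = 7e9          # hypothetical 7B-parameter model
    device_gib = 8.0      # hypothetical client device memory budget
    for bits in (16, 8, 4):
        size = weight_memory_gib(params, bits)
        ok = fits_on_device(params, bits, device_gib)
        print(f"{bits:>2}-bit weights: {size:5.2f} GiB, fits in {device_gib} GiB: {ok}")
```

Under these assumptions, the same model that exceeds the device budget at 16-bit precision fits once its weights are stored at 4 bits, which is why quantization is usually a precondition for on-device inference. Real memory use also depends on context length and runtime, so this is only a first-order estimate.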

Implications for Data Sovereignty and TCO

The choice between a cloud-based architecture and an on-premise or hybrid one directly impacts data sovereignty. When processing occurs on external servers, user data may be subject to different jurisdictions and privacy policies that do not always align with business needs or local regulations, such as GDPR. For highly regulated sectors, the ability to keep data within one's own infrastructural boundaries, perhaps in air-gapped environments, becomes a non-negotiable requirement.
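
In a hybrid setup, the sovereignty requirement described above often reduces to a routing rule: requests carrying regulated data stay on local infrastructure, and everything else may go to the cloud. The sketch below illustrates that idea only; the `Request` fields, the `Target` names, and the policy itself are hypothetical and would need to reflect an organization's actual compliance rules.

```python
from dataclasses import dataclass
from enum import Enum


class Target(Enum):
    LOCAL = "local"   # self-hosted or on-device inference
    CLOUD = "cloud"   # third-party hosted inference


@dataclass(frozen=True)
class Request:
    prompt: str
    contains_personal_data: bool   # hypothetical flag set by an upstream classifier
    air_gapped: bool = False       # True when the environment has no external network


def choose_target(req: Request) -> Target:
    """Keep regulated or air-gapped requests local; allow the rest to use the cloud."""
    if req.air_gapped or req.contains_personal_data:
        return Target.LOCAL
    return Target.CLOUD


if __name__ == "__main__":
    print(choose_target(Request("Summarize this public page", False)))
    print(choose_target(Request("Summarize this patient record", True)))
```

The value of writing the rule as a single function is that the policy stays auditable in one place, separate from the inference code that acts on its result.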

Furthermore, Total Cost of Ownership (TCO) is a critical factor. While the cloud offers a flexible OpEx model, costs can rapidly escalate with increased usage and model complexity. An on-premise deployment, while requiring a higher initial investment (CapEx) for hardware acquisition (GPUs, servers, storage), can offer predictable and potentially lower operational costs in the long term, especially for stable, high-volume workloads. Evaluating these trade-offs is fundamental for CTOs and infrastructure architects.
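
The CapEx-versus-OpEx trade-off can be approximated with a simple break-even calculation. The sketch below assumes a flat monthly cloud bill and a flat monthly on-premises operating cost; all monetary figures are hypothetical, and a real TCO analysis would also account for depreciation, staffing, power, and changing usage.

```python
import math
from typing import Optional


def cloud_cost(months: int, monthly_cloud_cost: float) -> float:
    """Cumulative cloud spend under a flat monthly bill."""
    return months * monthly_cloud_cost


def on_prem_cost(months: int, capex: float, monthly_opex: float) -> float:
    """Cumulative on-premises spend: upfront hardware plus flat operating cost."""
    return capex + months * monthly_opex


def break_even_months(
    capex: float, monthly_opex: float, monthly_cloud_cost: float
) -> Optional[int]:
    """First month at which cumulative on-premises cost is no higher than cloud cost.

    Returns None when on-premises operation is not cheaper per month,
    in which case the upfront investment is never recovered.
    """
    monthly_saving = monthly_cloud_cost - monthly_opex
    if monthly_saving <= 0:
        return None
    return math.ceil(capex / monthly_saving)


if __name__ == "__main__":
    # Hypothetical figures for illustration only.
    capex, opex, cloud = 120_000.0, 2_000.0, 7_000.0
    months = break_even_months(capex, opex, cloud)
    print(f"Break-even after {months} months")
    print(f"Cloud total:   {cloud_cost(months, cloud):,.0f}")
    print(f"On-prem total: {on_prem_cost(months, capex, opex):,.0f}")
```

With these assumed inputs, the on-premises option catches up after two years; for bursty or short-lived workloads the monthly saving shrinks and the break-even point moves out or disappears entirely.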

Future Prospects and Strategic Decisions

The evolution of AI in browsers is just one example of how Large Language Models are permeating every aspect of digital interaction. For organizations, the ability to understand and manage the implications of these technologies is strategic. The decision of where and how to deploy AI capabilities, whether for an internal application, a customer-facing service, or process optimization, requires a thorough analysis of performance, security, compliance, and TCO requirements.

AI-RADAR focuses precisely on these aspects, providing analytical frameworks to evaluate the trade-offs between self-hosted and cloud solutions for LLM workloads. The key is to choose the architecture that best aligns with business objectives, while ensuring control, efficiency, and regulatory compliance.
