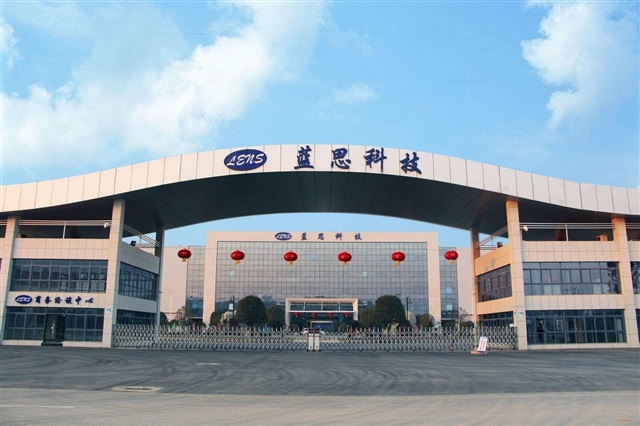

The Challenges of Global Supply Chains: The Lens Technology Case

News of a financial downturn at Lens Technology, a key supplier for Apple, has once again put a spotlight on the vulnerabilities and tensions running through global supply chains in the technology sector. This "earnings shock" is not an isolated event but a symptom of the complexities and interdependencies that characterize the production of high-tech devices and components. The performance of a single supplier can have significant repercussions on the entire production pipeline of giants like Apple, highlighting the need for more robust risk mitigation strategies.

The technology sector, particularly the part driving innovation in LLMs and artificial intelligence, depends critically on the availability of specific hardware. Components such as high-performance GPUs with ample VRAM, advanced memory modules, and specialized processors are the core of AI infrastructures. Supply chain disruptions can therefore translate into production delays, increased costs, and difficulties in meeting demand, directly affecting companies' ability to innovate and scale their solutions.

The Impact on On-Premise LLM Deployments

For organizations evaluating the deployment of Large Language Models on-premise, supply chain dynamics take on strategic importance. The decision to host AI infrastructures locally is often driven by data sovereignty requirements, regulatory compliance, or the desire to maintain total control over the operational environment, including air-gapped environments. However, this choice entails greater reliance on direct hardware procurement. The availability of servers, GPUs (such as A100 or H100 with high VRAM specifications), and high-speed storage solutions becomes a limiting or enabling factor.

Fluctuations in component prices and delivery times can drastically alter the Total Cost of Ownership (TCO) of an on-premise infrastructure. A delay in the delivery of a batch of GPUs can postpone an entire project by months, adding operational costs and causing missed opportunities. For those evaluating on-premise deployments, it is crucial to consider not only the initial cost (CapEx) but also supply chain resilience as an integral part of the TCO analysis. AI-RADAR offers analytical frameworks on /llm-onpremise to evaluate these trade-offs, providing tools for informed strategic planning.
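To make the point about delays and price swings concrete, here is a minimal sketch of a TCO calculation that treats delivery delay and hardware price change as explicit inputs. The `OnPremScenario` class, its field names, and every figure below are hypothetical and chosen only for illustration; they are not taken from AI-RADAR's frameworks or from any vendor pricing.

```python
from dataclasses import dataclass


@dataclass
class OnPremScenario:
    """Hypothetical inputs for a simplified on-premise TCO estimate."""
    capex: float               # quoted hardware price (servers, GPUs, storage)
    monthly_opex: float        # power, cooling, staff per month once running
    lifetime_months: int       # planned service life of the hardware
    delay_months: float        # expected delivery delay
    monthly_delay_cost: float  # cost per month of delay (idle team, interim capacity)
    price_change: float = 0.0  # fractional change in hardware price, 0.10 = +10%


def tco(s: OnPremScenario) -> float:
    """Sum hardware, operations, and delay costs over the planned lifetime."""
    hardware = s.capex * (1 + s.price_change)
    operations = s.monthly_opex * s.lifetime_months
    delay = s.delay_months * s.monthly_delay_cost
    return hardware + operations + delay


# Illustrative numbers only: same project, with and without a supply disruption.
baseline = OnPremScenario(1_000_000, 20_000, 36, 0, 50_000)
disrupted = OnPremScenario(1_000_000, 20_000, 36, 3, 50_000, price_change=0.10)

print(f"baseline TCO:  {tco(baseline):,.0f}")
print(f"disrupted TCO: {tco(disrupted):,.0f}")
print(f"cost of disruption: {tco(disrupted) - tco(baseline):,.0f}")
```

This model is deliberately linear and ignores discounting, depreciation, and financing. Its only purpose is to show that delay and price volatility can sit in the same formula as CapEx and OpEx rather than being handled as an afterthought.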

Strategies to Mitigate Risks and Ensure Resilience

Facing an increasingly volatile supply chain landscape, companies must adopt proactive strategies to mitigate risks. Supplier diversification is a common tactic, reducing dependence on a single player and increasing flexibility in case of disruptions. Creating buffer stocks for critical components can also offer a cushion against unforeseen delays, although this entails additional storage and capital management costs.
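The trade-off between buffer stock and carrying cost can be sketched with the textbook safety-stock formula, which assumes demand and lead time are independent and roughly normally distributed. The function names, parameters, and numbers below are illustrative assumptions, not figures from the article.

```python
import math
from statistics import NormalDist


def safety_stock(avg_demand: float, sd_demand: float,
                 avg_lead_time: float, sd_lead_time: float,
                 service_level: float = 0.95) -> float:
    """Units of buffer stock for a target service level.

    Demand is per period (e.g. GPUs per month); lead time is in the same
    periods. Assumes independent, normally distributed demand and lead time.
    """
    z = NormalDist().inv_cdf(service_level)  # z-score for the service level
    variance = avg_lead_time * sd_demand ** 2 + avg_demand ** 2 * sd_lead_time ** 2
    return z * math.sqrt(variance)


def annual_holding_cost(units: float, unit_cost: float, holding_rate: float) -> float:
    """Yearly cost of keeping `units` in stock (storage plus tied-up capital)."""
    return units * unit_cost * holding_rate


# Illustrative only: 8 GPUs/month average demand, 3-month lead time that varies by 1 month.
buffer_units = safety_stock(avg_demand=8, sd_demand=2,
                            avg_lead_time=3, sd_lead_time=1,
                            service_level=0.95)
cost = annual_holding_cost(math.ceil(buffer_units), unit_cost=30_000, holding_rate=0.20)
print(f"buffer: {math.ceil(buffer_units)} units, holding cost per year: {cost:,.0f}")
```

In this model, greater lead-time variability raises the required buffer, which is why an unstable supplier increases carrying costs even when average delivery times are unchanged.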

Another emerging strategy is the localization or regionalization of production and procurement. While not feasible for every high-tech component, reducing the geographical distance between producer and consumer can shorten delivery times and lower exposure to geopolitical events or natural disasters. For AI infrastructures, particularly those intended for sensitive or critical workloads, the ability to ensure a constant flow of reliable hardware is as important as the choice of LLM or deployment framework.

Future Prospects for AI Infrastructure

The Lens Technology case serves as a warning for the entire technology ecosystem. Supply chain resilience is no longer just a logistical issue but a strategic pillar influencing innovation capacity and competitiveness. For companies investing in Large Language Models and artificial intelligence, infrastructure planning must extend beyond the technical specifications of servers and GPUs, embracing a holistic vision that includes procurement robustness.

Ensuring access to high-performance and reliable hardware is essential to support model evolution and inference efficiency. Whether for on-premise, hybrid, or edge deployments, the ability to anticipate and manage supply chain challenges will be a determining factor for the long-term success of AI strategies. Today's decisions regarding procurement and supplier management will shape the deployment capability and scalability of tomorrow's AI solutions.
