Samsung's progress on HBM4 memory

The generative artificial intelligence landscape is evolving rapidly, and demand for higher-performance hardware is growing with it. In this context, HBM (High Bandwidth Memory) is a key building block for AI accelerators, particularly GPUs used for inference and training of Large Language Models (LLMs). Samsung, one of the major players in the semiconductor industry, has announced a significant improvement in the production yield of its HBM4 memory.

This progress matters for the entire technology supply chain. Higher yield means greater availability of these critical components, which in turn can affect the production of high-end GPUs and, ultimately, the ability of companies to deploy robust, scalable AI solutions. HBM4 is designed to deliver higher bandwidth than previous generations, a requirement for handling the massive datasets and complex models typical of LLMs.
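The link between yield and availability can be made concrete with a little arithmetic. The sketch below is only an illustration: the function names, the number of candidate stacks, the yield percentages, and the stacks-per-accelerator figure are all hypothetical and are not taken from Samsung's announcement.

```python
def good_stacks(candidate_stacks: int, yield_percent: int) -> int:
    """Number of usable HBM stacks out of those manufactured.

    yield_percent is an integer from 0 to 100 (hypothetical input).
    """
    if not 0 <= yield_percent <= 100:
        raise ValueError("yield_percent must be between 0 and 100")
    return candidate_stacks * yield_percent // 100


def accelerators_supplied(candidate_stacks: int, yield_percent: int,
                          stacks_per_accelerator: int) -> int:
    """Number of accelerators that can be fully populated with good stacks."""
    if stacks_per_accelerator <= 0:
        raise ValueError("stacks_per_accelerator must be positive")
    return good_stacks(candidate_stacks, yield_percent) // stacks_per_accelerator


if __name__ == "__main__":
    # Hypothetical scenario: 10,000 stacks built, 8 stacks per accelerator.
    for pct in (50, 70):
        n = accelerators_supplied(10_000, pct, 8)
        print(f"yield {pct}% -> {n} accelerators")
```

With the same manufacturing volume, moving from the first hypothetical yield to the second supplies proportionally more accelerators, which is the mechanism the paragraph describes.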

Technical details and Nvidia's praise

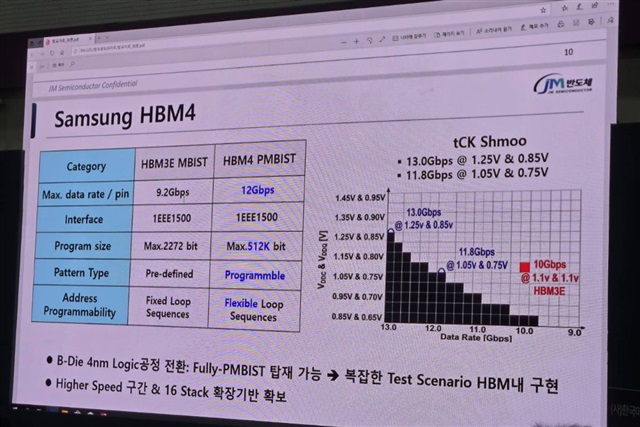

Alongside the HBM4 yield gains, Samsung has introduced an update to its 4-nanometer manufacturing process that includes a PMBIST (Process Monitor Built-In Self Test) technology. The update was well received by Nvidia, the leading supplier of GPUs for AI. Approval from a player like Nvidia underlines that these improvements matter not only for production volume but also for component quality and reliability.

The 4-nanometer process packs more transistors into a smaller area, improving chip efficiency and performance. PMBIST, for its part, is a built-in feature that monitors and tests the manufacturing process directly on the silicon, which improves reliability and reduces defects. For companies evaluating on-premise LLM deployments, hardware stability and longevity are key factors that directly affect total cost of ownership (TCO) and operational continuity.

Implications for on-premise deployments and TCO

Better HBM4 production and more reliable 4-nanometer processes have direct consequences for AI deployment strategies, especially those that favor self-hosted infrastructure. The availability of high-bandwidth memory is a limiting factor for GPU performance, and a supply bottleneck can delay the expansion of AI compute capacity. An improvement in HBM4 yield can therefore speed up the availability of next-generation AI accelerators.
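A rough back-of-the-envelope sketch shows why memory bandwidth can cap GPU performance. It assumes that single-stream LLM decoding is memory-bound, so each generated token requires reading all model weights from memory once. The function name and every figure used (70 billion parameters, 2 bytes per parameter, 2 TB/s and 4 TB/s of bandwidth) are hypothetical illustrations, not Samsung or Nvidia specifications.

```python
def max_decode_tokens_per_second(num_parameters: float,
                                 bytes_per_parameter: float,
                                 bandwidth_bytes_per_second: float) -> float:
    """Upper bound on single-stream decode speed under a memory-bound assumption.

    Assumes every token requires streaming all weights once and ignores
    compute time, KV-cache traffic, and batching (simplifying assumptions).
    """
    if min(num_parameters, bytes_per_parameter, bandwidth_bytes_per_second) <= 0:
        raise ValueError("all inputs must be positive")
    bytes_per_token = num_parameters * bytes_per_parameter
    return bandwidth_bytes_per_second / bytes_per_token


if __name__ == "__main__":
    # Hypothetical model: 70e9 parameters stored in 16-bit precision.
    for bandwidth in (2e12, 4e12):  # hypothetical bandwidths in bytes/s
        rate = max_decode_tokens_per_second(70e9, 2, bandwidth)
        print(f"{bandwidth / 1e12:.0f} TB/s -> at most {rate:.1f} tokens/s")
```

Under these assumptions the bound scales linearly with bandwidth, so doubling memory bandwidth doubles the ceiling on decode speed regardless of how much compute the GPU has.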

For CTOs and infrastructure architects, the choice of on-premise deployment is often driven by the need for data sovereignty, regulatory compliance, and fine-grained control over the environment. In this scenario, hardware reliability and performance are crucial. Improvements in the quality and production yield of components such as HBM4 help reduce maintenance costs and lower overall TCO, making local deployments more competitive with cloud alternatives.
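The TCO comparison can be sketched with a deliberately simple model. Everything here is an assumption made for illustration: the function names, the cost structure (one-time purchase plus flat annual operating cost versus a flat hourly cloud rate), and all monetary figures are hypothetical and do not come from the announcement.

```python
def on_prem_tco(capex: float, annual_opex: float, years: int) -> float:
    """Total cost of ownership: purchase price plus yearly power and maintenance."""
    if years <= 0:
        raise ValueError("years must be positive")
    return capex + annual_opex * years


def cloud_cost(hourly_rate: float, hours_per_year: float, years: int) -> float:
    """Cumulative cost of renting equivalent capacity at a flat hourly rate."""
    if years <= 0:
        raise ValueError("years must be positive")
    return hourly_rate * hours_per_year * years


if __name__ == "__main__":
    years = 3
    # Hypothetical figures for one server running around the clock.
    local = on_prem_tco(capex=300_000, annual_opex=40_000, years=years)
    rented = cloud_cost(hourly_rate=20.0, hours_per_year=8_760, years=years)
    print(f"on-premise over {years} years: {local:,.0f}")
    print(f"cloud over {years} years:      {rented:,.0f}")
```

In this model, more reliable components show up as a lower `annual_opex`, which is how better yield and quality could shift the comparison toward local deployments.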

Outlook for AI hardware

The developments announced by Samsung highlight the ongoing race to innovate in AI hardware. Demand for compute capacity for LLMs and other AI applications shows no sign of slowing, pushing semiconductor manufacturers to invest heavily in research and development. HBM4, with its promise of higher bandwidth and capacity, will be a defining element of future generations of accelerators.

For companies facing strategic decisions about AI infrastructure, tracking these advances is essential. The ability to make the most of new technologies while balancing performance, cost, and security requirements will determine the success of their AI projects. The commitment of manufacturers such as Samsung to improving the quality and availability of critical components like HBM4 is a positive signal for the whole ecosystem, especially for those betting on robust, controlled solutions in self-hosted environments.
