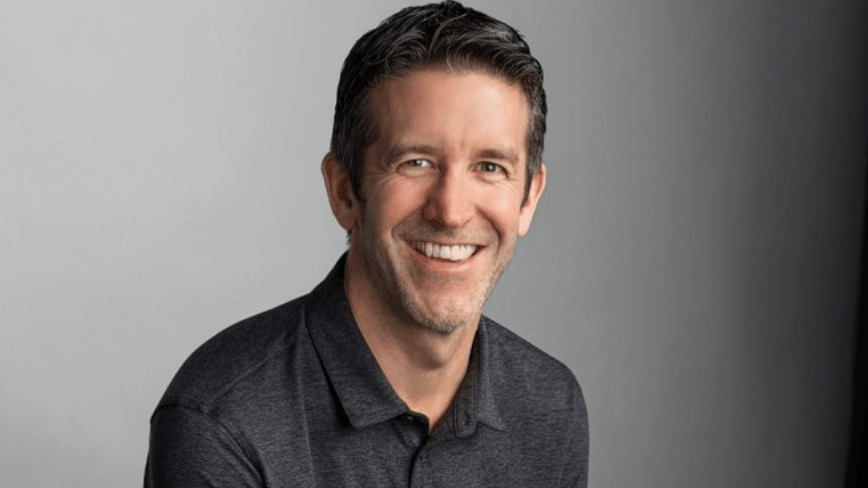

John Ternus at the helm of Apple: the AI challenge

Starting September 1, John Ternus will take over as CEO of Apple, bringing two decades of experience in the company's hardware division. A 50-year-old mechanical engineer, Ternus has played a crucial role at key moments for Apple: he helped reverse a period of declining product quality and actively championed the creation of iPadOS. His leadership was also instrumental in the transition to Apple Silicon, an initiative that redefined the performance and energy efficiency of the Cupertino giant's devices.

With oversight of product lines that generate roughly 80% of Apple's total revenue, Ternus now faces one of the most significant challenges in today's technology landscape: artificial intelligence. His ability to solve problems systemically, rather than looking for someone to blame, will be put to the test in a fast-moving field where integrating AI is not just a software matter but demands deep understanding and innovation at the hardware level.

The legacy of Apple Silicon and its implications for AI

The transition to Apple Silicon demonstrated Apple's ability to design and optimize its own chips for specific needs, a model that could prove crucial in the AI era. Developing and running Large Language Models (LLMs) and other AI workloads places stringent demands on hardware, particularly on memory capacity (VRAM on discrete GPUs, unified memory on Apple Silicon), on compute for inference, and on throughput. Optimizing silicon for these operations is essential to deliver high performance at contained power consumption, which matters both for edge devices and for data-center infrastructure.
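
Why memory matters so much for inference can be made concrete with a common back-of-the-envelope estimate: at batch size 1, generating each token requires streaming roughly all model weights from memory, so memory bandwidth divided by model size gives an upper bound on tokens per second. The sketch below assumes that simplification; the 7B model size and the 1,000 GB/s bandwidth figure are hypothetical values, not the specs of any Apple or NVIDIA chip.

```python
def max_decode_tokens_per_s(params_billion: float,
                            bytes_per_param: float,
                            bandwidth_gb_s: float) -> float:
    """Rough upper bound on single-stream decode speed (tokens/s).

    Assumption: generating one token reads every model weight once from
    memory, so decoding is limited by memory bandwidth. KV-cache reads,
    compute limits and batching are ignored.
    """
    # Weight footprint in GB: 1e9 params * bytes_per_param / 1e9 bytes per GB
    weight_gb = params_billion * bytes_per_param
    return bandwidth_gb_s / weight_gb


if __name__ == "__main__":
    # Hypothetical 7B-parameter model on a hypothetical 1,000 GB/s device
    for label, bpp in [("FP16", 2.0), ("8-bit", 1.0), ("4-bit", 0.5)]:
        tps = max_decode_tokens_per_s(7, bpp, 1000)
        print(f"{label}: ~{tps:.0f} tokens/s upper bound")
```

Under this simplified model, halving the bytes per parameter (for example through quantization) roughly doubles the bound, which is one reason memory capacity and bandwidth weigh so heavily in AI-oriented chip design.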

For companies evaluating LLM deployment, the choice between cloud and on-premise solutions is often driven by hardware considerations. An on-premise infrastructure, for example, requires careful planning of GPUs, dedicated memory (such as the VRAM on NVIDIA A100 or H100 cards), and networking capacity. Apple's control over the entire hardware-software stack could give it a competitive edge in AI integration, but the challenges of scalability and model efficiency remain complex.
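
GPU planning of this kind usually starts with a memory budget: the model weights plus the key/value (KV) cache, which grows with context length and batch size. The sketch below is a minimal estimate under stated assumptions: a standard transformer KV cache, a hypothetical 70B-parameter model with hypothetical layer and head dimensions, 80 GB per GPU, and 90% of that memory usable, with activations and framework overhead folded into the remaining margin.

```python
import math


def weights_gb(params_billion: float, bytes_per_param: float) -> float:
    """Memory for model weights in GB (1e9 params * bytes / 1e9)."""
    return params_billion * bytes_per_param


def kv_cache_gb(n_layers: int, n_kv_heads: int, head_dim: int,
                seq_len: int, batch: int, bytes_per_value: float) -> float:
    """KV-cache size in GB: keys and values (factor 2) for every layer,
    KV head, head dimension, token position and sequence in the batch."""
    values = 2 * n_layers * n_kv_heads * head_dim * seq_len * batch
    return values * bytes_per_value / 1e9


def gpus_needed(total_gb: float, gpu_mem_gb: float = 80.0,
                usable_fraction: float = 0.9) -> int:
    """Minimum GPU count, assuming the model shards evenly across GPUs."""
    return math.ceil(total_gb / (gpu_mem_gb * usable_fraction))


if __name__ == "__main__":
    # Hypothetical model: 70B params in FP16, 80 layers, 8 KV heads,
    # head_dim 128, 4,096-token context, batch of 1, FP16 cache
    w = weights_gb(70, 2)
    kv = kv_cache_gb(80, 8, 128, 4096, 1, 2)
    total = w + kv
    print(f"weights {w:.1f} GB + KV {kv:.2f} GB = {total:.1f} GB "
          f"-> {gpus_needed(total)} x 80 GB GPUs")
```

With these hypothetical numbers the weights dominate (140 GB versus about 1.3 GB of KV cache), but the cache scales linearly with batch size and context length, so serving many concurrent users can shift the balance.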

Data sovereignty and on-premise control in the AI era

Managing AI, especially LLMs, raises important questions about data sovereignty and regulatory compliance. Many organizations, particularly in regulated sectors, prefer to retain full control over their data and models, opting for self-hosted or air-gapped deployments. This choice requires investing in robust bare-metal infrastructure capable of supporting intensive AI training and inference workloads.

The Total Cost of Ownership (TCO) of an on-premise AI deployment is a key factor. Although the upfront hardware investment can be significant, long-term operating costs, including energy and licensing, can differ considerably from those of cloud-based solutions. For those evaluating on-premise deployments, AI-RADAR offers analytical frameworks at /llm-onpremise for assessing the trade-offs between cost, performance, and security requirements, providing tools for informed decisions without making direct recommendations.
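
In its simplest form, the cloud versus on-premise TCO comparison reduces to a break-even calculation: the upfront hardware cost divided by the monthly saving of running on-premise. The sketch below assumes flat monthly costs and ignores depreciation, financing, hardware refresh cycles and price changes; all figures are hypothetical.

```python
import math


def breakeven_months(capex: float, onprem_monthly_opex: float,
                     cloud_monthly: float) -> float:
    """Months until cumulative on-prem cost equals cumulative cloud cost.

    Assumes flat monthly costs. Returns math.inf when on-prem running
    costs are not lower than the cloud bill (no break-even point).
    """
    monthly_saving = cloud_monthly - onprem_monthly_opex
    if monthly_saving <= 0:
        return math.inf
    return capex / monthly_saving


def cumulative_costs(months: int, capex: float, onprem_monthly_opex: float,
                     cloud_monthly: float) -> tuple[float, float]:
    """Return (on-prem, cloud) total spend after `months` months."""
    return capex + onprem_monthly_opex * months, cloud_monthly * months


if __name__ == "__main__":
    # Hypothetical: 300k upfront, 5k/month on-prem energy, licences and ops,
    # versus 20k/month for equivalent cloud capacity
    print(breakeven_months(300_000, 5_000, 20_000))  # 20.0
    print(cumulative_costs(36, 300_000, 5_000, 20_000))
```

Past the break-even month the on-premise option keeps pulling ahead under these assumptions, while a smaller monthly saving (or a hardware refresh before break-even) can erase the advantage entirely.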

Future outlook for AI and hardware innovation

For a leader like John Ternus, the challenge of "understanding AI" is not only about integrating smart features into existing products but also about defining the next generation of hardware optimized for artificial intelligence. This could mean further innovations in silicon design, with a focus on dedicated AI accelerators and more efficient memory architectures to handle ever larger and more complex models. Apple's ability to innovate at the chip level, already demonstrated with Apple Silicon, will be a decisive factor in its positioning in the AI landscape.

The industry as a whole faces the need to balance compute power, energy efficiency, and cost to make AI accessible and scalable. Strategic decisions by companies like Apple in this area will have a significant impact not only on their own products but on the entire technology ecosystem, shaping the development of new hardware and software standards for on-premise and distributed AI.
