Introduction

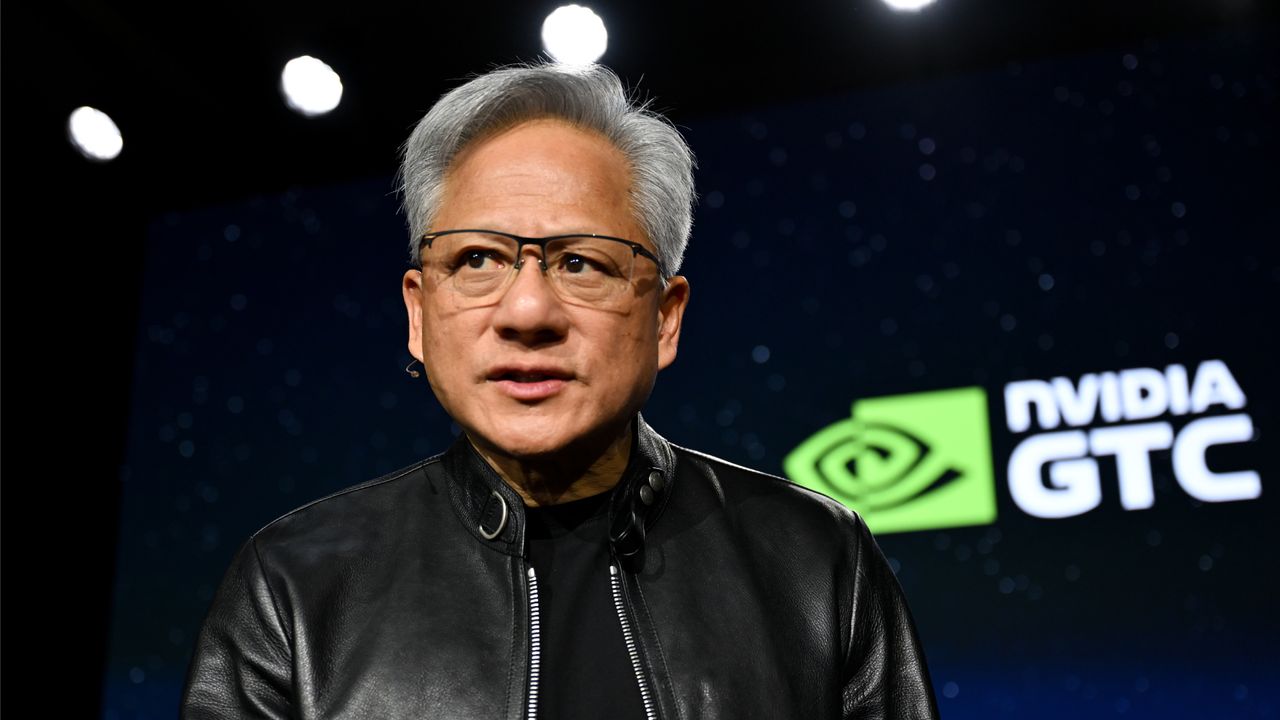

Jensen Huang, CEO of Nvidia, finds himself at the center of a debate that extends far beyond market dynamics, touching on geopolitics and technological sovereignty. His recent reaction, bordering on annoyance, when questioned about selling chips to China highlights the complexity and sensitivity of the decisions that leading companies in the semiconductor sector must face. In an era where LLMs and artificial intelligence define the future of innovation, access to high-performance computing hardware becomes a strategic factor of primary importance for nations and businesses alike.

Nvidia's position as the undisputed leader in producing GPUs essential for LLM training and inference makes it a key player in this global chessboard. Its choices not only affect company balance sheets but also have direct repercussions on the innovation capacity and competitiveness of entire economies, especially for those intending to develop and deploy AI solutions in self-hosted environments.

Geopolitical Context and Hardware Implications

Tensions between major global powers have led to restrictions on the export of advanced technologies, particularly semiconductors. This scenario creates an uncertain environment for companies that rely on these critical components for their AI deployments. The availability of computing accelerators, such as high-VRAM GPUs, is fundamental for managing the intensive workloads typical of LLMs, both during training and inference phases.
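To make the point about high-VRAM GPUs concrete, the sketch below estimates the memory needed just to hold a model's weights, using the standard approximation of parameter count times bytes per parameter. The function names, the 70-billion-parameter model, and the 80 GB card are illustrative assumptions, not figures from this article. The estimate deliberately ignores the KV cache, activations, and (for training) optimizer state, so real requirements are higher.

```python
import math


def weight_memory_gb(num_params: float, bytes_per_param: float) -> float:
    """Memory needed to hold the model weights alone, in GB (10^9 bytes)."""
    return num_params * bytes_per_param / 1e9


def min_gpus_for_weights(num_params: float, bytes_per_param: float,
                         vram_gb_per_gpu: float) -> int:
    """Lower bound on the number of GPUs needed to fit the weights.

    This is only a floor: KV cache, activations, and framework overhead
    are not counted here.
    """
    needed = weight_memory_gb(num_params, bytes_per_param)
    return math.ceil(needed / vram_gb_per_gpu)


if __name__ == "__main__":
    # Hypothetical example: a 70B-parameter model on hypothetical 80 GB cards.
    params = 70e9
    for label, nbytes in [("16-bit", 2.0), ("8-bit", 1.0), ("4-bit", 0.5)]:
        gb = weight_memory_gb(params, nbytes)
        gpus = min_gpus_for_weights(params, nbytes, vram_gb_per_gpu=80)
        print(f"{label}: {gb:.0f} GB of weights, at least {gpus} GPU(s)")
```

Even as a rough floor, this kind of estimate shows why the VRAM per accelerator, and therefore which specific GPU models can be procured, shapes what an on-premise deployment can run.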

For organizations evaluating an on-premise deployment, supply chain stability and certainty of access to specific hardware are crucial aspects. Limitations can translate into higher costs, delivery delays, or, in the worst case, the inability to procure the silicon needed to build a robust and scalable AI infrastructure. This directly impacts the total cost of ownership (TCO) and long-term infrastructure planning.

Data Sovereignty and Deployment Strategies

The issue of chip supply is closely intertwined with themes of data sovereignty and compliance. Many companies, particularly in regulated sectors, choose self-hosted or air-gapped deployments to maintain full control over their data and comply with stringent regulations. In these contexts, reliance on external suppliers for critical hardware, and potential disruptions due to geopolitical factors, can represent a significant risk.

A company's ability to build and maintain its on-premise AI infrastructure depends directly on its ability to access cutting-edge hardware components. Strategic decisions by companies like Nvidia, influenced by governmental pressures, can therefore have a profound impact on system architectures and on the choice between cloud and self-hosted solutions. Those evaluating on-premise deployments face complex trade-offs between flexibility, cost, and control, which must be carefully analyzed, as explored in the analytical frameworks offered by AI-RADAR on /llm-onpremise.

Future Outlook and Resilience

Jensen Huang's reaction underscores not only the pressure on the industry but also an awareness of the strategic importance of the products Nvidia supplies. Looking ahead, CTOs, DevOps leads, and infrastructure architects will need to factor geopolitical dynamics and supply chain risks, not just hardware specifications, into their AI deployment strategies.

AI infrastructure resilience will increasingly depend on the ability to anticipate and mitigate these uncertainties. This could mean diversifying suppliers, exploring alternative hardware architectures, or investing in internal production capabilities, where possible. The discussion about selling chips to China is just one example of how global decisions can have a direct and tangible impact on technological innovation strategies at the enterprise level.
