The Evolution of TSMC's CoWoS Packaging

TSMC, a global leader in semiconductor manufacturing, recently outlined the roadmap for its CoWoS (Chip-on-Wafer-on-Substrate) advanced packaging technology. CoWoS is central to the high-performance chip industry, particularly to processors built for artificial intelligence workloads. The roadmap is ambitious, with significant advances projected by 2029.

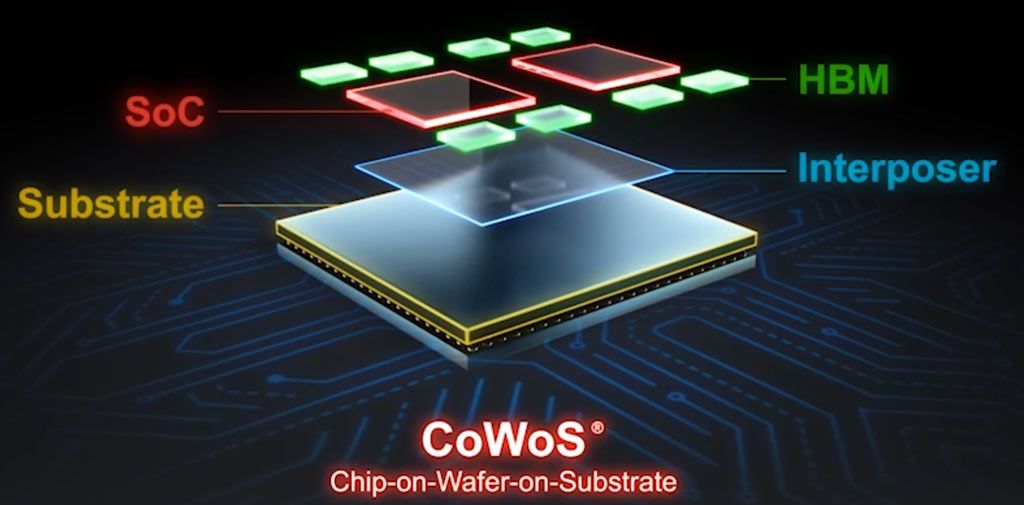

CoWoS packaging integrates multiple chips, such as GPUs and HBM (High Bandwidth Memory) stacks, side by side on an interposer, which is in turn mounted on a package substrate. Placing logic and memory this close together shortens the interconnects between them, which raises bandwidth and lowers the energy spent moving data. This kind of integration is vital for working around the physical limits of monolithic chips and for meeting the growing demand for compute power and memory bandwidth from Large Language Models (LLMs) and other intensive AI applications.

Technical Details: Compute and Memory for AI

TSMC's roadmap anticipates packages that exceed 14 reticles. In this context, a reticle refers to the reticle limit: the largest area a lithography scanner can expose in a single shot, roughly 26 mm × 33 mm (about 858 mm²). A package spanning 14 reticles therefore offers far more area than any single die can occupy, leaving room for more, or larger, compute dies and memory stacks in the same package. This added area translates directly into overall processing capability.
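To put "14 reticles" in perspective, the short sketch below converts reticle multiples into area. It assumes the standard full-field scanner exposure of 26 mm × 33 mm; TSMC's exact accounting for CoWoS reticle multiples may differ, and the function names are purely illustrative.

```python
import math

# Assumption: one "reticle" is the standard full-field exposure of 26 mm x 33 mm.
# TSMC's own accounting for CoWoS reticle multiples may differ slightly.
RETICLE_W_MM = 26.0
RETICLE_H_MM = 33.0


def reticle_area_mm2(width_mm: float = RETICLE_W_MM, height_mm: float = RETICLE_H_MM) -> float:
    """Area of one lithography exposure field, in mm^2."""
    return width_mm * height_mm


def package_area_mm2(reticles: float) -> float:
    """Approximate interposer area for a package sized in reticle multiples."""
    return reticles * reticle_area_mm2()


def square_side_mm(area_mm2: float) -> float:
    """Side length of a square with the given area, for intuition only."""
    return math.sqrt(area_mm2)


if __name__ == "__main__":
    for n in (1, 14):
        area = package_area_mm2(n)
        print(f"{n:>2} reticle(s): {area:,.0f} mm^2 (~{square_side_mm(area):.1f} mm square)")
```

Under this assumption, 14 reticles come to roughly 12,000 mm², comparable to a square about 110 mm on a side, whereas a single monolithic die is capped at about 858 mm².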

Projections indicate a 48x leap in compute power, an increase that could redefine what future AI accelerators can do. Memory is expected to keep pace, with support for up to 24 HBM5E stacks. HBM is essential for AI workloads because it offers far higher bandwidth than conventional memory. Since aggregate bandwidth grows with the number of stacks, 24 stacks of HBM5E, an advanced generation of HBM, should deliver another substantial increase in memory bandwidth, a critical factor for serving ever larger and more complex LLMs that need rapid access to vast amounts of data.
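Why stack count matters can be shown with simple arithmetic: in a package of identical stacks, aggregate bandwidth and capacity both scale linearly with the number of stacks. The sketch below also applies a common rule of thumb for memory-bound LLM decoding (each generated token reads all model weights once). The per-stack width, pin rate, and capacity are hypothetical placeholders, not HBM5E specifications, and the helper names are illustrative.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class HbmStack:
    """Per-stack HBM parameters. Values used below are hypothetical
    placeholders, not published HBM5E specifications."""
    interface_bits: int   # width of the stack's data interface, in bits
    gbps_per_pin: float   # data rate per pin, in Gbit/s
    capacity_gb: float    # capacity of one stack, in GB

    def bandwidth_gb_s(self) -> float:
        # interface width (bits) x per-pin rate (Gbit/s) = Gbit/s; divide by 8 for GB/s
        return self.interface_bits * self.gbps_per_pin / 8


def package_memory(stack: HbmStack, num_stacks: int) -> tuple[float, float]:
    """Aggregate bandwidth (GB/s) and capacity (GB) for identical stacks."""
    return stack.bandwidth_gb_s() * num_stacks, stack.capacity_gb * num_stacks


def decode_tokens_per_s_ceiling(bandwidth_gb_s: float, weights_gb: float) -> float:
    """Rough upper bound for memory-bound, batch-size-1 decoding, assuming the
    weights fit in HBM and every token reads them once. Ignores KV-cache
    traffic, batching, on-chip caches and compute limits."""
    return bandwidth_gb_s / weights_gb


if __name__ == "__main__":
    stack = HbmStack(interface_bits=2048, gbps_per_pin=8.0, capacity_gb=48.0)  # hypothetical
    for n in (8, 24):
        bw, cap = package_memory(stack, n)
        # 70B parameters at 2 bytes each (FP16/BF16) is about 140 GB of weights
        print(f"{n:>2} stacks: {bw:,.0f} GB/s, {cap:,.0f} GB, "
              f"<= {decode_tokens_per_s_ceiling(bw, 140.0):.0f} tokens/s for a 140 GB model")
```

In this simplified model, tripling the stack count triples both bandwidth and the decode ceiling; real packages also change per-stack width and speed from one HBM generation to the next.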

Implications for On-Premise Deployments

These advances in packaging have profound implications for organizations evaluating on-premise or self-hosted AI deployments. The higher compute density and memory bandwidth of future CoWoS packages should make it possible to build more powerful and efficient local AI infrastructure. For CTOs, DevOps leads, and infrastructure architects, this means being able to run complex AI workloads, including training and inference of large models, directly within their own data centers.

Packing more compute power and memory into a single package is key to optimizing the Total Cost of Ownership (TCO) of on-premise infrastructure. Higher density reduces the floor space, power, and cooling needed per unit of compute, and can lower long-term operating costs. Furthermore, for companies with stringent data sovereignty requirements, compliance needs, or the need for air-gapped environments, advanced hardware that delivers high performance on-premise is an indispensable strategic component. For those evaluating on-premise deployments, analytical frameworks are available at /llm-onpremise to assess the trade-offs between self-hosted and cloud solutions.
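The density argument can be made concrete with a deliberately simple annual TCO model: amortized hardware cost plus facility energy (IT power scaled by PUE) plus rack space, divided by delivered compute. This is a minimal sketch, not a complete TCO methodology; every field and number below is a hypothetical placeholder, and real analyses add staffing, networking, maintenance, and financing.

```python
from dataclasses import dataclass

HOURS_PER_YEAR = 24 * 365


@dataclass(frozen=True)
class Deployment:
    """Hypothetical on-premise configuration; all values are placeholders."""
    capex_usd: float           # hardware purchase price
    lifetime_years: float      # straight-line amortization period
    it_power_kw: float         # average IT load
    pue: float                 # power usage effectiveness (facility power / IT power)
    racks: int                 # rack positions consumed
    rack_cost_usd_year: float  # yearly cost per rack position (space, power distribution)
    compute_units: float       # delivered compute, in any consistent unit


def annual_tco_usd(d: Deployment, usd_per_kwh: float) -> float:
    """Amortized capex + facility energy + rack space, per year."""
    energy_usd = d.it_power_kw * d.pue * HOURS_PER_YEAR * usd_per_kwh
    return d.capex_usd / d.lifetime_years + energy_usd + d.racks * d.rack_cost_usd_year


def tco_per_compute_unit(d: Deployment, usd_per_kwh: float) -> float:
    """Annual TCO normalized by delivered compute."""
    return annual_tco_usd(d, usd_per_kwh) / d.compute_units


if __name__ == "__main__":
    # Hypothetical: a less dense setup versus a denser one with more compute per rack.
    baseline = Deployment(2_000_000, 4, 40, 1.5, 4, 12_000, 100)
    dense = Deployment(2_400_000, 4, 45, 1.3, 1, 12_000, 200)
    for name, d in (("baseline", baseline), ("dense", dense)):
        print(f"{name}: ${tco_per_compute_unit(d, 0.10):,.0f} per compute unit per year")
```

Normalizing by compute units is the key design choice: a denser system can cost more in absolute terms and still come out cheaper per unit of work.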

Future Outlook and Trade-offs

TSMC's CoWoS technology evolution underscores the direction the semiconductor industry is taking to support the exponential growth of AI. The ability to integrate an ever-increasing number of transistors and memory modules into a single package is key to unlocking new frontiers in compute power.

However, these innovations also come with trade-offs. The manufacturing complexity and associated costs of advanced packaging may increase. Furthermore, the thermal management of such dense and powerful packages will require increasingly sophisticated cooling solutions within data centers. Companies will need to balance performance demands with considerations of cost, energy efficiency, and operational complexity, choosing architectures that best fit their specific constraints and strategic objectives.
